React SSR Framework Showdown: TanStack Start, React Router, and Next.js Under Load

Performance benchmarks capture a moment, not a final judgment. Results depend on a specific workload, scale, and constraints; they do not rank frameworks by value. Next.js stands out for its widespread adoption, strong compatibility, and vast ecosystem trusted by millions. TanStack, as a newcomer, made bold architectural choices. React Router is positioned differently along the maturity curve. Each framework wins in its own context.

The numbers matter less than the response: every team we shared data with acted on it and delivered fixes. This kind of collaboration, built on open data, shared flamegraphs, and upstream fixes, is what makes Node.js a safe, long-term choice for enterprise teams.

We are revising these results after readers pointed out some inconsistencies in the code, and we will publish updated numbers soon.

TL;DR

With help from Claude Code, we built the same eCommerce app in three SSR frameworks and tested them at 1,000 requests per second on AWS EKS. We ran each framework on Kubernetes twice: once under Watt and once as plain Node.js.

The results revealed big performance differences and highlighted a few key themes:

- Running Node services on Watt improves average latency.
- The TanStack team is doing excellent work. Their framework outperformed the others we tested by a wide margin.
- The Next.js team has made impressive performance improvements. Upgrading from v15 to v16 canary more than doubled throughput and cut average latency about sixfold. Their collaboration also led to a 75% speedup in React’s RSC deserialization, which benefits everyone using React.

Both the TanStack and Next.js teams used platformatic/flame to find and resolve critical performance bottlenecks that the benchmark uncovered. More on that below.

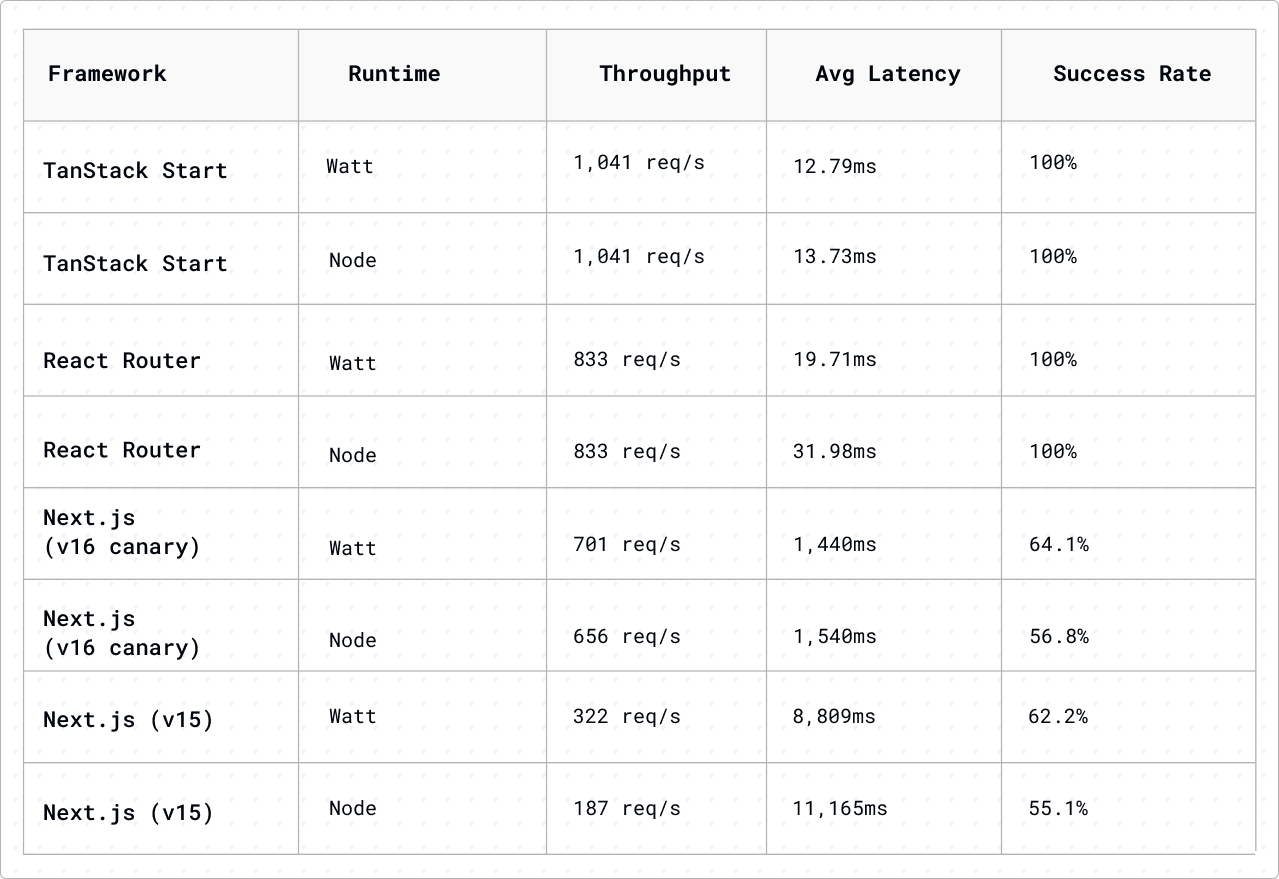

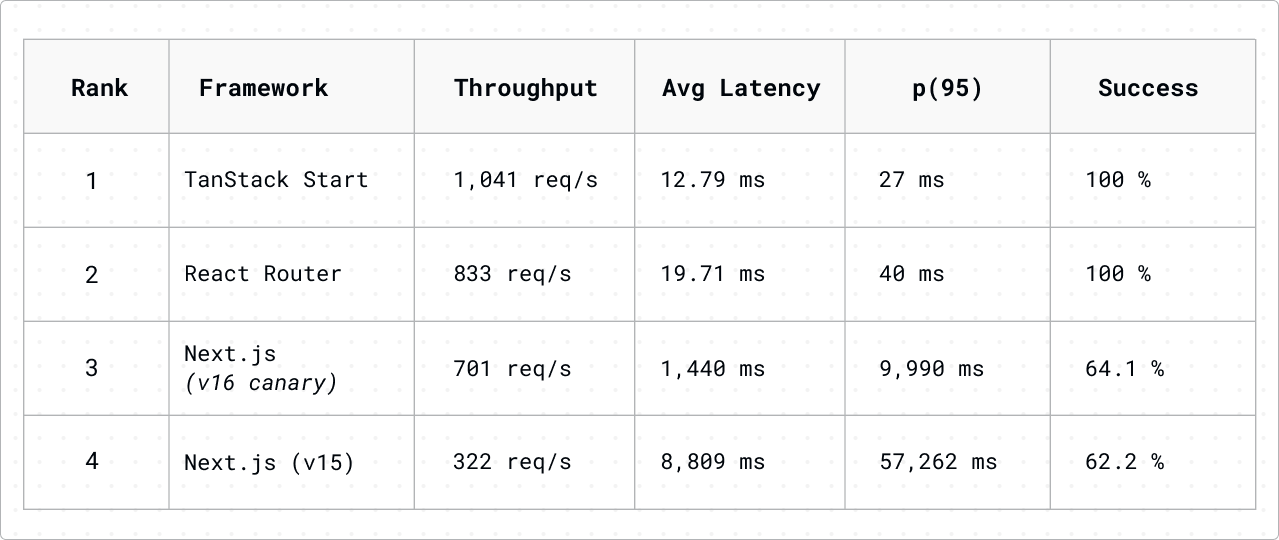

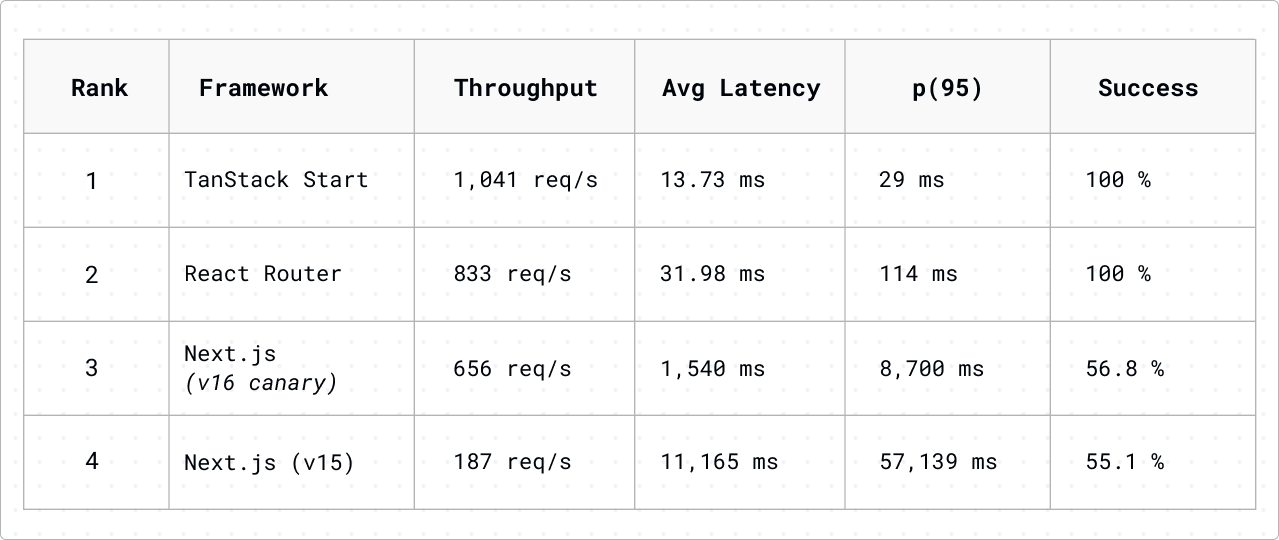

TanStack Start outperformed React Router by 25% in throughput and had 35% lower latency. Both frameworks achieved a 100% success rate, meaning every request got an HTTP 200 response within our 10-second timeout. This strict definition keeps the comparison fair and matches real-world SLA expectations. Next.js struggled under our benchmark load, but upgrading from v15.5.5 to v16.2.0-canary.66 more than doubled its throughput (from 322 to 701 requests per second) and cut average latency about sixfold.

To mirror common enterprise e-commerce scenarios, we used no caching in this test. Caching is often avoided in these settings because of aggressive personalization and A/B testing. In many large-scale e-commerce deployments, personalization means individual user views overlap very little, often less than 5%, so cache hits provide minimal benefit compared to the invalidation overhead. This explicit trade-off reflects real-world scenarios, where companies prioritize dynamic user experiences over the potential gains from caching.
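A back-of-the-envelope bound makes this trade-off concrete. As a simplification, assume the low view overlap caps the cache hit ratio h at about 0.05. Let t_hit be the response time of a cache hit and t_miss the response time of a full SSR render, and ignore invalidation cost entirely:

```latex
\bar{t} = h\,t_{\text{hit}} + (1-h)\,t_{\text{miss}} \;\ge\; (1-h)\,t_{\text{miss}} \;\ge\; 0.95\,t_{\text{miss}} \qquad (h \le 0.05)
```

Even an instantaneous cache (t_hit = 0) would therefore cut average response time by at most about 5%, before paying anything for invalidation.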

Collaboration note: We shared benchmark data and flamegraphs (via platformatic/flame) with both the TanStack and Next.js teams. The TanStack team fixed a critical bottleneck, delivering a 252x improvement in response times. The Next.js team’s Tim Neutkens used our flamegraphs to identify a JSON.parse reviver overhead in React Server Components, resulting in a 75% speedup in RSC deserialization merged into React itself.

Although we ran these benchmarks on a canary release of Next.js, all of these improvements are part of Next.js 16.2.0, which is coming out very soon.

The Challenge: Apples-to-Apples Framework Comparison

Comparing SSR performance (or performance in general) across frameworks is notoriously tricky. Teams usually build and deploy an app in a single framework, so a reasonable like-for-like comparison is rare.

Luckily for us, we live in an era where writing code costs only as much as the tokens your favorite LLM spends generating it. So we built three (more or less) identical e-commerce sample applications with the help of our dear friend Claudio. The code is available in the benchmark repository listed under Reproducing These Benchmarks.

The Application: CardMarket

For these benchmarks, we built a trading card marketplace app, similar to a simplified version of TCGPlayer or CardMarket. The data model includes 5 games (Pokémon, Magic: The Gathering, Yu-Gi-Oh!, Digimon, and One Piece), 50 card sets (10 per game), 10,000 cards (200 per set), 100 sellers with ratings and locations, and 50,000 listings with prices, conditions, and quantities.

The app includes several types of pages and routes to create a realistic e-commerce experience, all generated by Claude Code:

- The homepage shows featured games, trending cards, and new releases.
- There’s a search page with full-text search, filtering, and pagination.
- Game detail pages show info about each game and its sets, while set detail pages list cards with pagination.
- Card detail pages display card info and seller listings.
- The sellers’ list page shows all sellers with their ratings, and each seller has a profile and listings page.
- There’s also a cart page with a static shopping cart.

We made several design choices to keep the implementations consistent:

- All data comes from JSON files, and every framework uses the same data.
- We added a random 1-5ms delay to simulate real database latency.
- Every route uses full SSR with no client-side data fetching.
- All versions share the same UI components, layouts, and Tailwind CSS styling.
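The random 1-5ms delay could be implemented roughly as follows. This is a hypothetical sketch, not the benchmark's actual code. The names `randomDelayMs`, `simulateDbLatency`, and `withDbLatency` are introduced here for illustration, and the sketch assumes a whole-millisecond delay drawn uniformly from 1 to 5.

```typescript
// Hypothetical helpers; the benchmark repository's actual implementation may differ.

// Pick a delay in whole milliseconds, uniformly from 1 to 5 (assumes integer delays).
function randomDelayMs(random: () => number = Math.random): number {
  return 1 + Math.floor(random() * 5);
}

// Resolve after the simulated "database" round trip, reporting the delay used.
function simulateDbLatency(random: () => number = Math.random): Promise<number> {
  const ms = randomDelayMs(random);
  return new Promise((resolve) => setTimeout(() => resolve(ms), ms));
}

// Wrap a synchronous read from the in-memory JSON data so it behaves like a DB query.
async function withDbLatency<T>(load: () => T, random: () => number = Math.random): Promise<T> {
  await simulateDbLatency(random);
  return load();
}

// Usage inside a loader (`cards` is a hypothetical array loaded from the shared JSON files):
// const card = await withDbLatency(() => cards.find((c) => c.id === cardId));
```

Passing `random` as a parameter keeps the helpers deterministic under test, while the default `Math.random` gives the jitter you want under load.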

The Frameworks

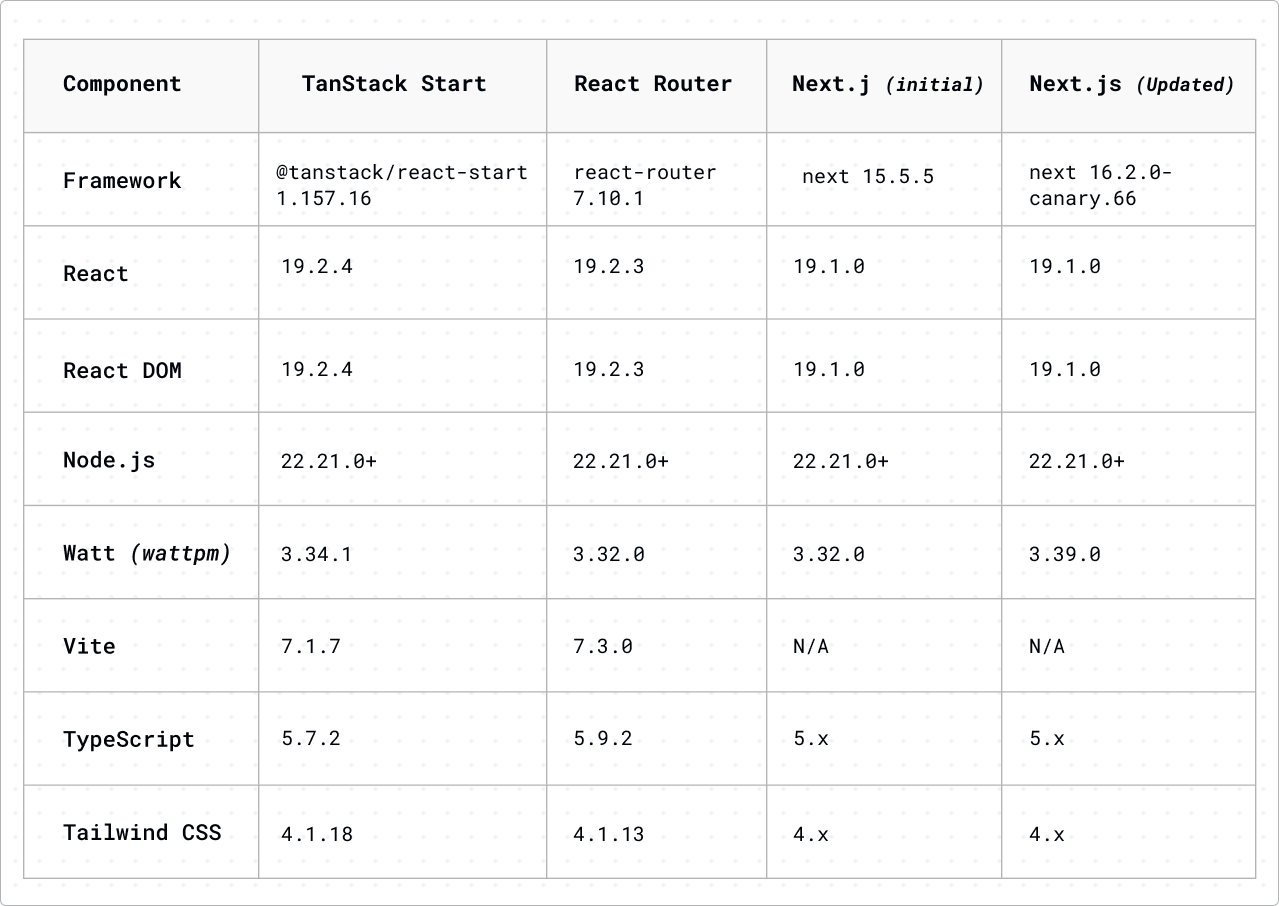

We implemented this application in three frameworks:

- TanStack Start (v1.157.16) - The newest entrant, built on TanStack Router with Vite for SSR
- React Router (v7) - The classic routing library, now with first-class SSR support
- Next.js (v15, updated to v16 canary) - The established leader in React SSR

Each implementation uses the framework’s idiomatic patterns:

- TanStack Start: createFileRoute with loader functions
- React Router: Route modules with loader exports
- Next.js: App Router with Server Components

The Runtimes

For each framework, we tested two runtime configurations:

- Node.js - Single-threaded, 6 pods with 1 CPU each
- Watt - Multi-worker with SO_REUSEPORT, 3 pods with 2 CPUs each and 2 workers per pod, making full use of all 6 CPUs

All configurations received identical total CPU allocation (6 cores) for fair comparison.

Test Methodology

Infrastructure

- EKS Cluster: 4 nodes running m5.2xlarge instances (8 vCPUs, 32GB RAM each)
- Load Testing Instance: c7gn.2xlarge (8 vCPUs, 16GB RAM, network-optimized)
- Region: us-west-2
- Load Testing Tool: Grafana k6

Software Versions

All versions are locked in package.json for reproducible benchmarks:

Load Test Configuration

Each test followed this protocol:

- NLB Warm-up: 60 seconds ramping from 10 to 500 req/s
- Pre-test Warm-up: 20 seconds at moderate load
- Cool-down: 60 seconds before the main test
- Main Test: 60 seconds ramp-up to 1,000 req/s, then 120 seconds sustained
- Between Tests: 480 seconds cooldown
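To reproduce the shape of the main test phase, one option is k6's `ramping-arrival-rate` executor. The sketch below expresses that phase as a scenario object and adds a small helper that estimates how many requests it generates. The starting rate of 0 and the VU pool sizes are assumptions, not values from our scripts. `toSeconds` and `expectedRequests` are hypothetical helpers. In a real k6 script, the object would be exported as `options` alongside a default function that issues the HTTP requests.

```typescript
interface Stage {
  duration: string; // k6-style duration, e.g. "60s" or "2m"
  target: number; // requests per `timeUnit` at the end of the stage
}

// Main test phase as a k6 ramping-arrival-rate scenario (VU counts and startRate are assumptions).
const mainTest = {
  executor: "ramping-arrival-rate",
  startRate: 0, // assumption: the starting rate is not stated in the post
  timeUnit: "1s", // targets are requests per second
  preAllocatedVUs: 200, // assumption
  maxVUs: 2000, // assumption
  stages: [
    { duration: "60s", target: 1000 }, // ramp up to 1,000 req/s
    { duration: "120s", target: 1000 }, // hold 1,000 req/s
  ] as Stage[],
};

// In a k6 script this would be `export const options = ...`.
const options = { scenarios: { main: mainTest } };

// Parse the simple "Ns" / "Nm" durations used above.
function toSeconds(duration: string): number {
  const match = /^(\d+)(s|m)$/.exec(duration);
  if (!match) throw new Error(`unsupported duration: ${duration}`);
  return Number(match[1]) * (match[2] === "m" ? 60 : 1);
}

// Expected request count, treating each stage as a linear ramp between rates (trapezoid rule).
function expectedRequests(startRate: number, stages: Stage[]): number {
  let rate = startRate;
  let total = 0;
  for (const stage of stages) {
    total += ((rate + stage.target) / 2) * toSeconds(stage.duration);
    rate = stage.target;
  }
  return total;
}

console.log(Object.keys(options.scenarios)); // [ 'main' ]
console.log(expectedRequests(mainTest.startRate, mainTest.stages)); // 150000
```

Under these assumptions, the main phase issues about 150,000 requests: 30,000 during the linear ramp and 120,000 during the sustained 120 seconds.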

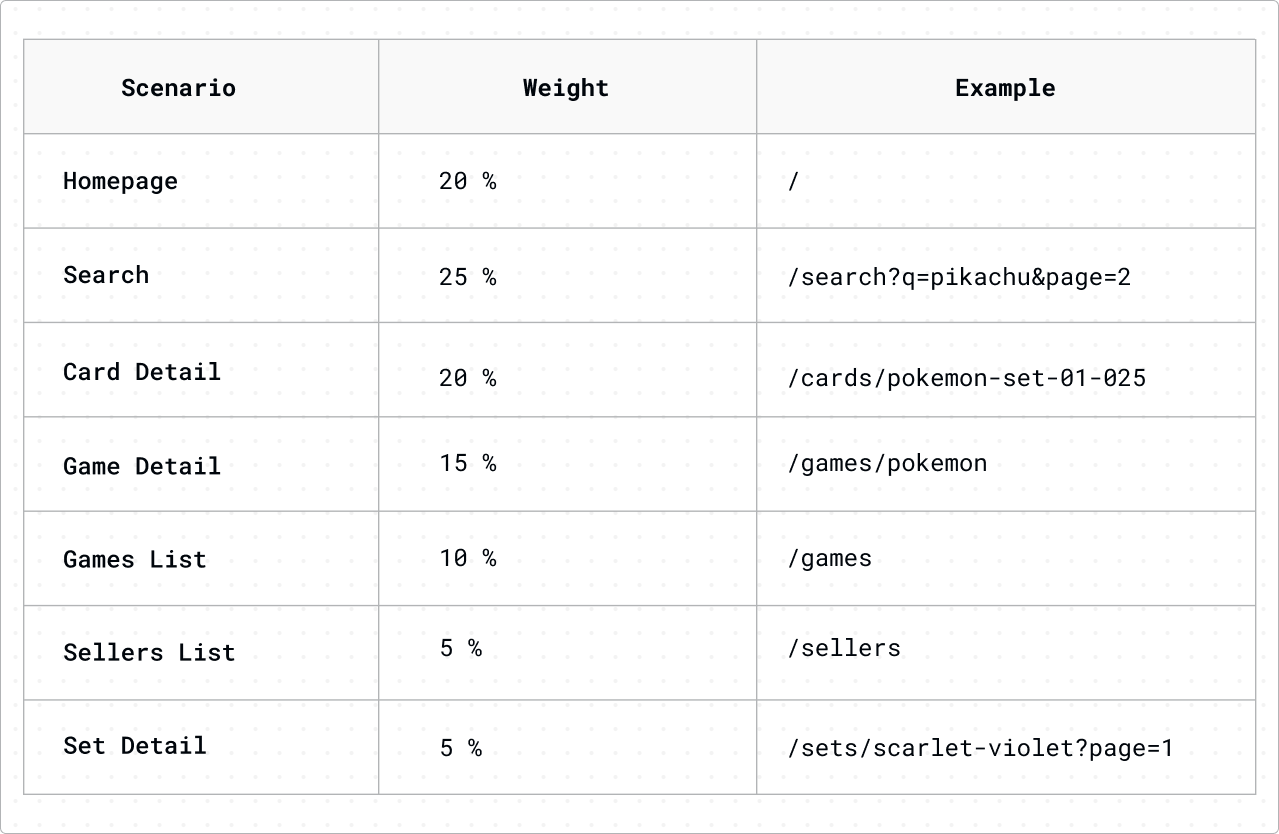

Realistic Traffic Distribution

The load test simulated realistic e-commerce traffic patterns:

Results

TanStack Start: The Performance Leader

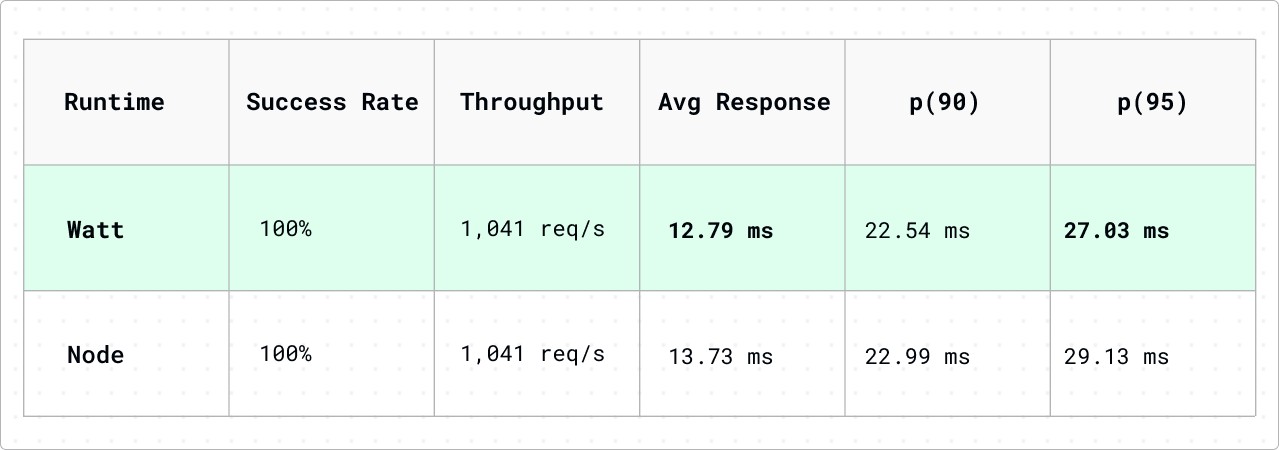

After Update (v1.157.16)

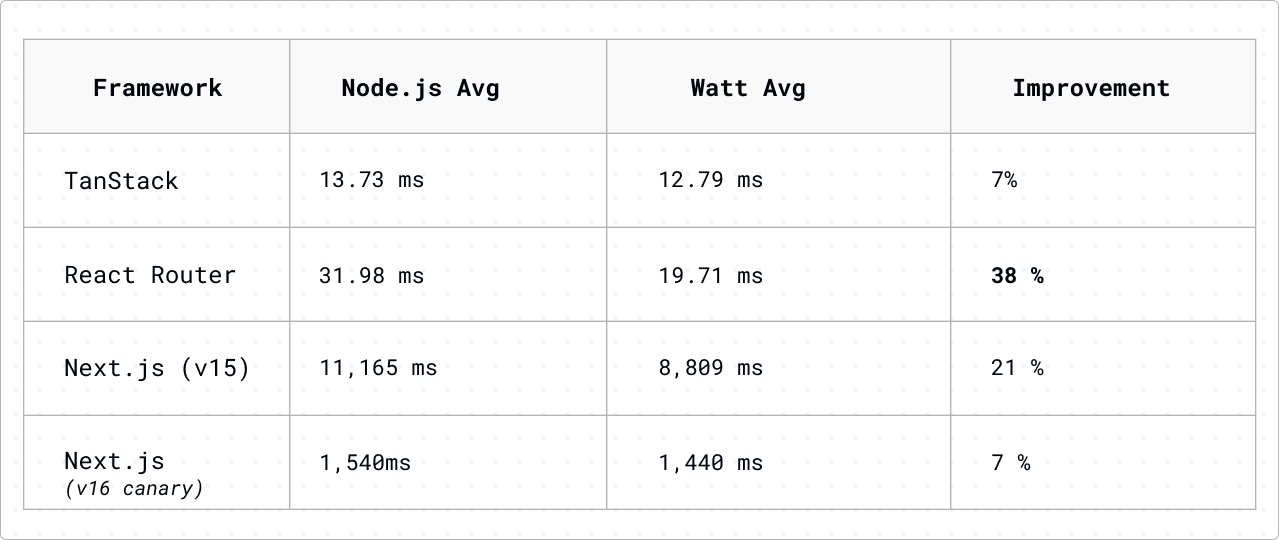

TanStack Start delivered exceptional performance, with the highest throughput and lowest latency of all frameworks tested. With Watt, average response times stayed under 13ms even at 1,000 requests per second.

React Router: Solid and Reliable

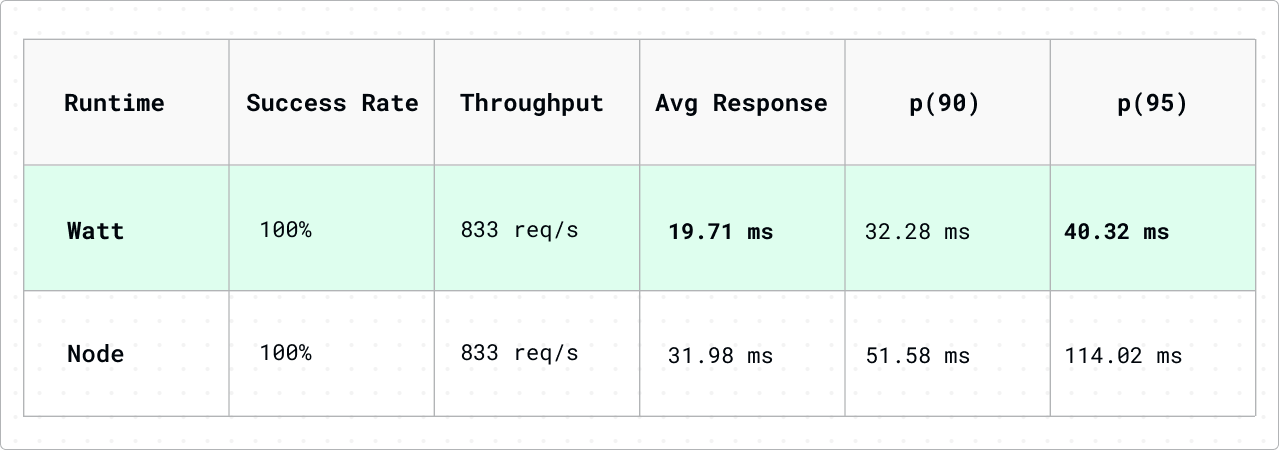

React Router managed the load well and had zero failures. Using Watt made response times 38% faster compared to standalone Node.js.

Next.js: Struggling Under Load, but Making Progress

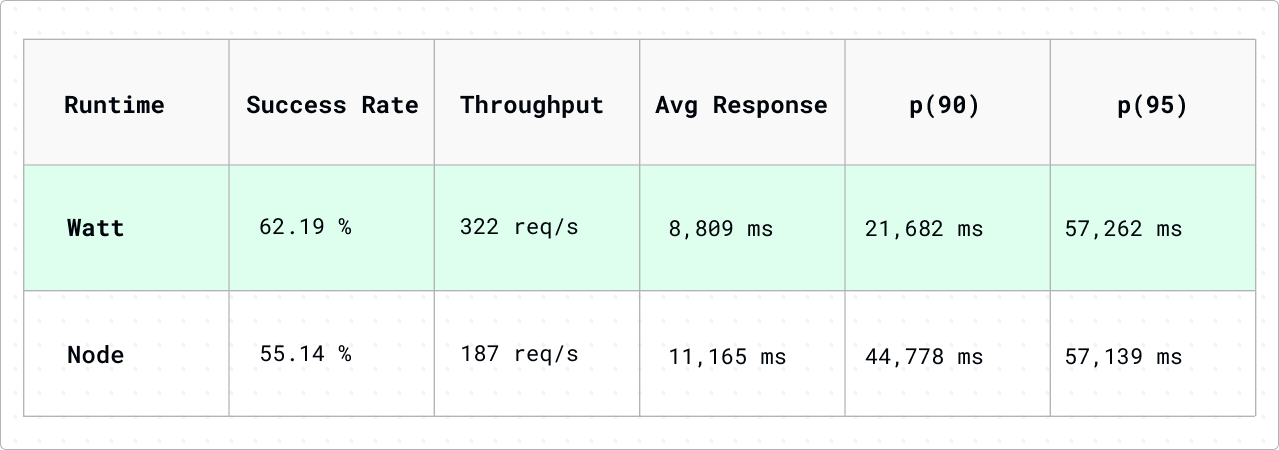

Initial Benchmark (Next.js 15.5.5, Watt 3.32.0)

Next.js couldn’t handle 1,000 requests per second. Response times averaged 8 to 11 seconds, and about 40% of requests failed. Even with Watt’s optimizations, Next.js lagged behind the lighter frameworks.

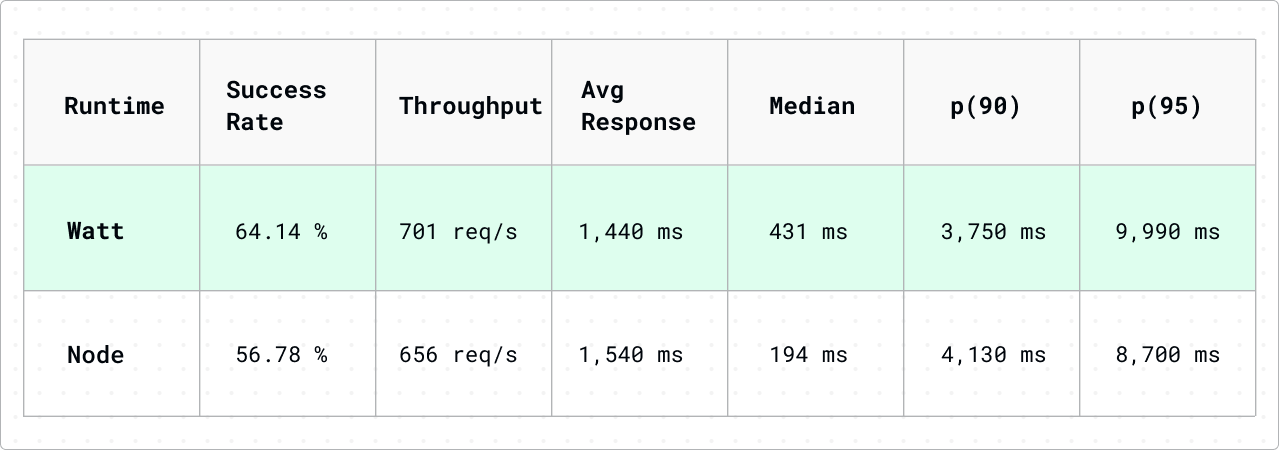

We re-ran the benchmarks after upgrading to the latest Next.js canary and Watt 3.39.0 to see if the situation had improved:

Next.js Version Improvement (Watt runtime)

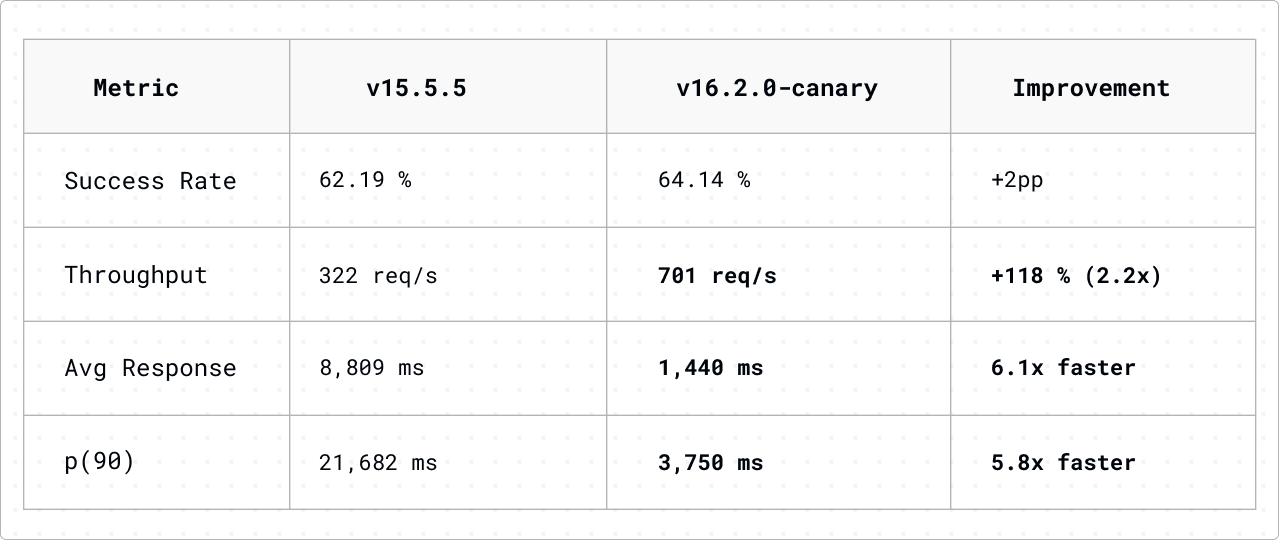

Upgrading from Next.js 15.5.5 to 16.2.0-canary.66, along with Watt 3.39.0, brought a big improvement:

- Throughput more than doubled.
- Average response time dropped about sixfold, an 83% reduction in latency.

The success rate only improved a little (about 36% of requests still failed), but the successful requests were served much faster, with the median response time dropping from seconds to 431ms.

This is real progress. Next.js is still the slowest of the three frameworks at this load, but the gap is closing, and more improvements are on the way.

Framework Collaborations: Benchmarks as a Catalyst

One of the best parts of this project was working directly with the framework teams. Sharing real-world benchmark data, especially flamegraphs that show where time is spent, helped turn abstract performance discussions into real fixes. (If you are on a web performance team, we’d love to talk.)

The Next.js Collaboration: Fixing RSC Deserialization

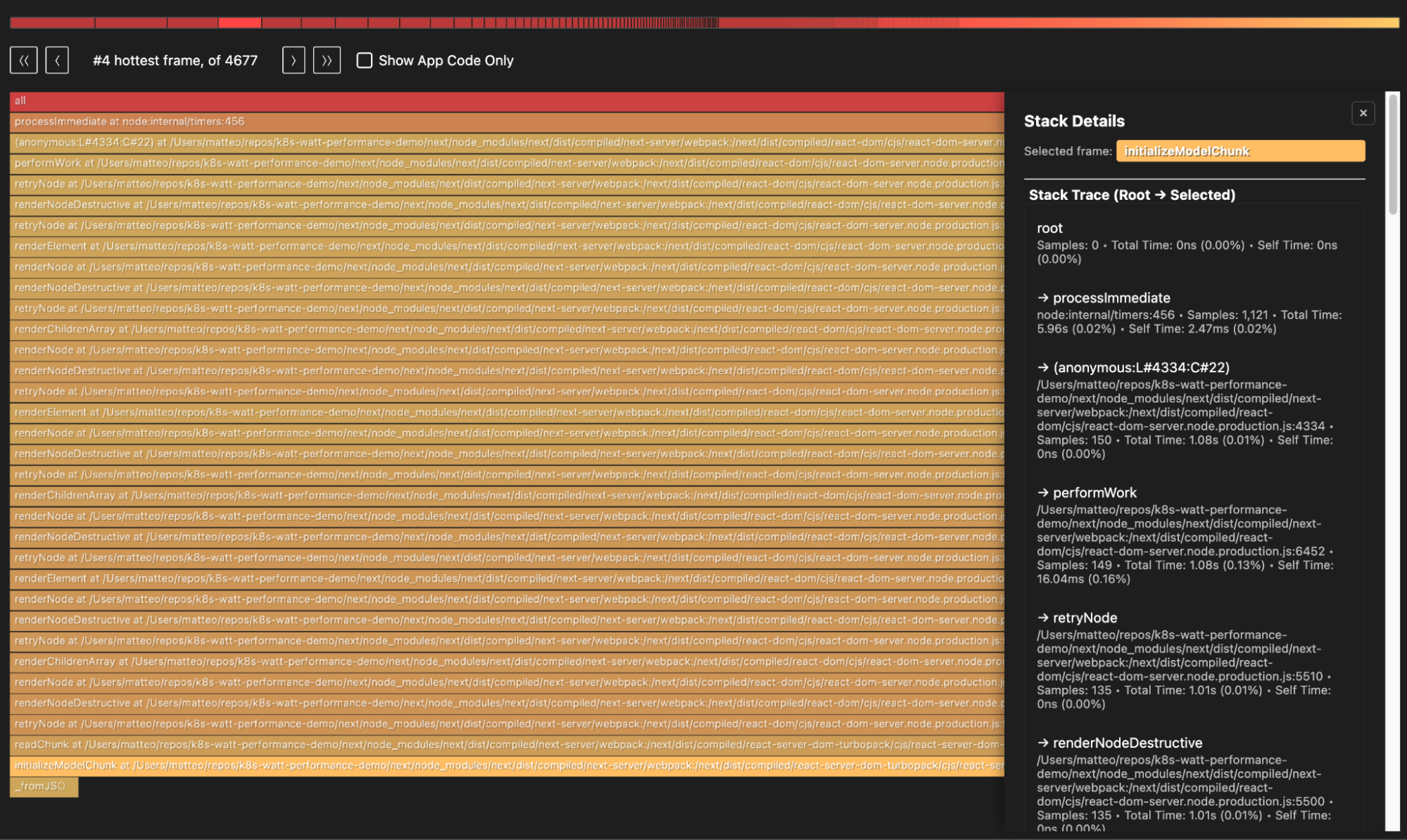

After our initial Next.js benchmarks showed multi-second response times, we shared flamegraphs from our load tests with Tim Neutkens from the Next.js team. The flamegraphs revealed a clear hotspot: initializeModelChunk. This function calls JSON.parse with a reviver callback in React Server Components (RSC) chunk deserialization.

The root cause was a well-known V8 performance characteristic: JSON.parse is implemented in C++, and passing a reviver callback forces a C++ → JavaScript boundary crossing for every key-value pair in the parsed JSON. Even a trivial no-op reviver (k, v) => v makes JSON.parse roughly 4x slower than bare JSON.parse without one. Since initializeModelChunk is called for every RSC chunk during SSR, this overhead compounds rapidly on pages with many server components.
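A quick way to see this per-pair cost is to count reviver invocations. The sketch below is our own illustration, with hypothetical helper names. It counts the calls for a tiny payload and times parsing with and without a no-op reviver. The ratio you measure depends on the V8 version and payload shape, so no specific number is claimed here.

```typescript
// Count how often JSON.parse invokes a reviver: once per key-value pair, plus once for the root.
function countReviverCalls(text: string): number {
  let calls = 0;
  JSON.parse(text, (_key, value) => {
    calls++;
    return value; // no-op reviver: every value is returned unchanged
  });
  return calls;
}

// Time `iterations` parses of `text`, optionally with a reviver (result in milliseconds).
function timeParse(text: string, iterations: number, reviver?: (key: string, value: any) => any): number {
  const start = performance.now();
  for (let i = 0; i < iterations; i++) JSON.parse(text, reviver);
  return performance.now() - start;
}

console.log(countReviverCalls('{"a":1,"b":[2,3]}')); // 5: "a", "0", "1", "b", and the root ""

// Hypothetical payload loosely shaped like a list of cards.
const payload = JSON.stringify(
  Array.from({ length: 1000 }, (_, i) => ({ id: i, name: `card-${i}`, price: i * 1.5 })),
);
console.log({
  plainMs: timeParse(payload, 200),
  noOpReviverMs: timeParse(payload, 200, (_k, v) => v),
});
```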

Tim identified the fix and submitted it directly to React: facebook/react#35776 (merged Feb 19, 2026). The change replaces the reviver callback with a two-step approach (a plain JSON.parse() followed by a recursive tree walk in pure JavaScript), yielding a ~75% speedup in RSC chunk deserialization.
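The two-step idea can be sketched in TypeScript as follows. This is not React's code. The "$D" date marker and the helper names (`transformString`, `parseWithReviver`, `reviveTree`, `parseThenWalk`) are hypothetical, chosen only to make the before/after comparison concrete.

```typescript
// Hypothetical convention for this sketch: strings starting with "$D" encode dates.
function transformString(s: string): unknown {
  return s.startsWith("$D") ? new Date(s.slice(2)) : s;
}

// Before: the reviver runs for every key-value pair while JSON.parse is building the tree.
function parseWithReviver(text: string): unknown {
  return JSON.parse(text, (_key, value) =>
    typeof value === "string" ? transformString(value) : value,
  );
}

// After, step 2: walk the already-parsed tree in plain JavaScript, mutating it in place.
function reviveTree(value: unknown): unknown {
  if (typeof value === "string") return transformString(value);
  if (Array.isArray(value)) {
    for (let i = 0; i < value.length; i++) value[i] = reviveTree(value[i]);
    return value;
  }
  if (value !== null && typeof value === "object") {
    const obj = value as Record<string, unknown>;
    for (const key of Object.keys(obj)) {
      const next = reviveTree(obj[key]);
      // defineProperty (rather than obj[key] = next) stays correct even for a "__proto__" key.
      if (next !== obj[key]) {
        Object.defineProperty(obj, key, { value: next, writable: true, enumerable: true, configurable: true });
      }
    }
    return obj;
  }
  return value; // numbers, booleans, and null pass through unchanged
}

// After: step 1 is a plain JSON.parse with no reviver.
function parseThenWalk(text: string): unknown {
  return reviveTree(JSON.parse(text));
}
```

The walk matches the reviver here because the transform depends only on each string value. A reviver that inspects keys, relies on `this`, or returns `undefined` to delete properties would need extra handling in the tree walk.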

This fix helps every React framework that uses Server Components, not just Next.js. It shows how profiling with real workloads can reveal optimization opportunities that microbenchmarks might miss.

The improvement is already reflected in our updated Next.js benchmarks (v16.2.0-canary.66), and we expect further gains as this optimization and others land in stable releases.

The TanStack Turnaround: A Case Study in Rapid Optimization

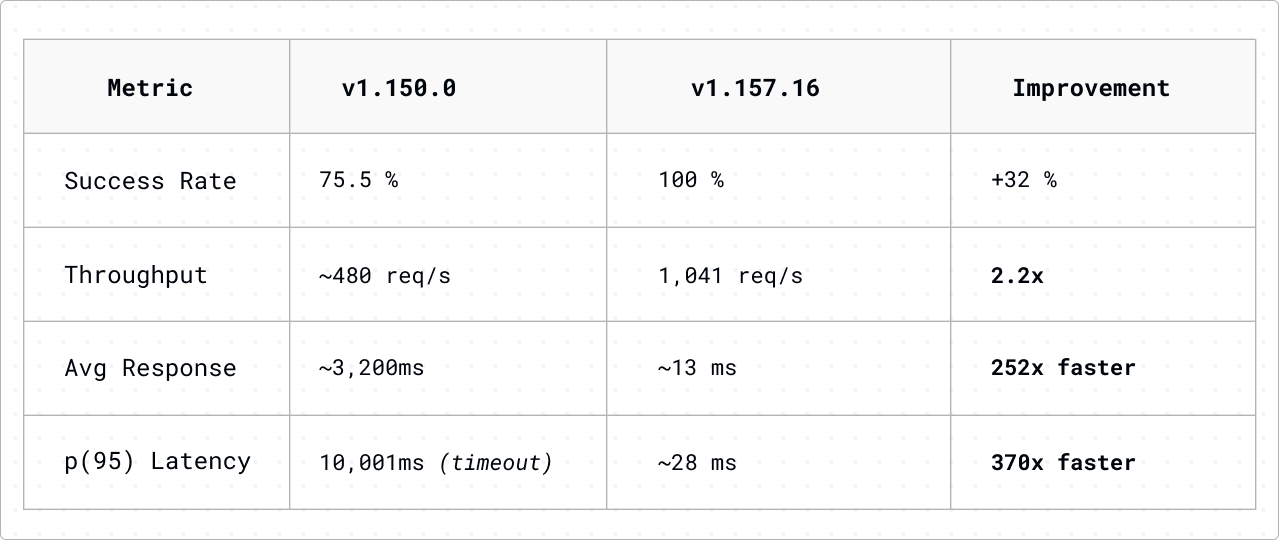

Interestingly enough, we had a similar journey with the TanStack team. Our initial benchmarks used TanStack Start v1.150.0, and the results were concerning: requests timing out, 75% success rates, and average response times exceeding 3 seconds. We shared these findings with the TanStack team, who quickly identified the critical bottlenecks (also via @platformatic/flame) in their SSR request handling pipeline.

Within 7 minor versions, they shipped a fix. We re-ran the benchmarks on v1.157.16, and the transformation was extraordinary:

The v1.150 numbers tell the story of a framework under distress. The p(95) latency hitting exactly 10,001ms wasn’t a coincidence, as the requests were slamming into our 10-second timeout limit. One in four requests failed entirely.

At 1,000 req/s, the framework was drowning.

After the fix, TanStack Start became the fastest framework in our benchmark. Response times dropped from seconds to milliseconds, the timeout cliff vanished, and every single request succeeded.

What makes this improvement even more notable is that it was runtime-agnostic. Both Watt and Node.js saw virtually identical gains: Watt improved from 3,228ms to 12.79ms average response time, while Node.js improved from 3,171ms to 13.73ms. This confirms that the bottleneck was purely in the framework’s code and that the fix benefited all users equally, regardless of their deployment strategy.
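The improvement factors follow directly from those averages. The Watt ratio is the 252x figure cited in this post, and the Node.js ratio is close behind:

```latex
\frac{3{,}228\ \text{ms}}{12.79\ \text{ms}} \approx 252 \qquad \frac{3{,}171\ \text{ms}}{13.73\ \text{ms}} \approx 231
```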

Runtime Comparison: Watt vs Node.js

Watt’s SO_REUSEPORT Advantage

Watt uses the Linux kernel’s SO_REUSEPORT socket option to let workers accept connections directly:

- The kernel distributes each connection to a worker.
- The worker processes the request.

No master coordination, no IPC overhead. The kernel handles load distribution efficiently.
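To make the mechanism concrete, here is a single-process Node.js sketch in which two HTTP servers bind the same port through the `reusePort` listen option, which relies on SO_REUSEPORT. It assumes Node.js 22.12 or later on a platform that supports the option, such as Linux. The helper name and port are arbitrary, and this is not how Watt itself is configured. Watt runs its own workers.

```typescript
import * as http from "node:http";
import { once } from "node:events";

// Start `count` HTTP servers that all bind the same port with SO_REUSEPORT.
// Requires Node.js >= 22.12 (the `reusePort` listen option) on a supporting platform such as Linux.
async function startReusePortServers(port: number, count: number): Promise<http.Server[]> {
  const servers: http.Server[] = [];
  for (let i = 0; i < count; i++) {
    const server = http.createServer((_req, res) => {
      res.end(`handled by listener ${i}\n`);
    });
    server.listen({ port, host: "127.0.0.1", reusePort: true });
    // Rejects if the server emits "error" (e.g. EADDRINUSE) before it starts listening.
    await once(server, "listening");
    servers.push(server);
  }
  return servers;
}
```

Without `reusePort: true`, the second `listen` call would fail with EADDRINUSE. With it, the kernel spreads incoming connections across both sockets, with no primary process forwarding them.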

When Does Watt Help Most?

Framework Rankings

With Watt Runtime

With Node.js Runtime

Reproducing These Benchmarks

The complete benchmark infrastructure is available at:

https://github.com/platformatic/k8s-watt-performance-demo/tree/ecommerce

To run the benchmarks:

```bash
# Benchmark TanStack Start
AWS_PROFILE=<profile-name> FRAMEWORK=tanstack ./benchmark.sh

# Benchmark React Router
AWS_PROFILE=<profile-name> FRAMEWORK=react-router ./benchmark.sh

# Benchmark Next.js
AWS_PROFILE=<profile-name> FRAMEWORK=next ./benchmark.sh

# Benchmark all frameworks
AWS_PROFILE=<profile-name> ./benchmark-all.sh
```

The script creates an ephemeral EKS cluster, deploys all three runtime configurations (Node, PM2, Watt), executes the load tests, and tears down the infrastructure automatically. The results for PM2 were omitted from the blog post because they align with previously reported findings (read 93% Faster Next.js in (your) Kubernetes).


Key Takeaways

- Watt Provides Consistent Improvements

  Watt improved performance for all frameworks compared to standalone Node.js. The gains ranged from 7% for TanStack to 38% for React Router. It’s a low-risk optimization that helped in every case we tested.

- TanStack Start is Production-Ready

  Despite being the newest framework, TanStack Start delivered the best performance. The team’s rapid response to performance issues (a 252x improvement across 7 versions) demonstrates an active focus on development and optimization.

- Keep Dependencies Updated

  The results from TanStack and Next.js both show how important it is to keep your dependencies up to date. TanStack improved from 75% to 100% success in 7 versions. Next.js doubled its throughput between v15 and v16 canary. You only get these performance improvements if you update.

- Framework Choice Matters More Than Runtime

  The difference between TanStack Start and Next.js (3x throughput, 690x latency difference) far exceeds the difference between Watt and Node.js on the same framework. Choose your framework wisely.

- Next.js Needs Caching

  At 1,000 req/s, Next.js struggled. For high-volume SSR workloads, users should consider aggressive caching strategies (ISR, edge caching, component caching). Next.js has great primitives for these, and you can use them in Watt. We did not implement any caching for Next.js because, in most e-commerce (or enterprise) scenarios, caching is a no-go: companies want aggressive personalization and A/B testing, running thousands of experiments in parallel. That said, the jump from v15 to v16 canary shows meaningful improvement, and if this trajectory continues, the gap will keep closing.

If you want performance to be a key part of your technology choices, try setting clear latency budgets for each route before you start building or picking a framework. Setting concrete performance goals early helps guide decisions about architecture and tools, and makes sure your stack meets real-world needs. Planning for latency by route can also show when caching, framework choice, or runtime tweaks will have the biggest impact on user experience.
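One lightweight way to act on this is to record per-route latency samples from a load test and check each route’s p95 against its budget. The sketch below uses the nearest-rank percentile. The helper names are hypothetical, and the route names and budget values are placeholders, not recommendations derived from these benchmarks.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p% of samples are <= it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100); // integer math first avoids float drift
  return sorted[Math.max(0, rank - 1)];
}

type Budgets = Record<string, number>; // route -> p95 budget in milliseconds

// Return one message per route whose p95 exceeds its budget (or that has no samples).
function checkBudgets(samplesByRoute: Record<string, number[]>, budgets: Budgets): string[] {
  const violations: string[] = [];
  for (const [route, budgetMs] of Object.entries(budgets)) {
    const samples = samplesByRoute[route];
    if (!samples || samples.length === 0) {
      violations.push(`${route}: no samples`);
      continue;
    }
    const p95 = percentile(samples, 95);
    if (p95 > budgetMs) violations.push(`${route}: p95 ${p95}ms > budget ${budgetMs}ms`);
  }
  return violations;
}

// Hypothetical samples and budgets, for illustration only.
console.log(
  checkBudgets({ "/": [10, 12, 15], "/search": [90, 120, 150] }, { "/": 50, "/search": 100 }),
); // [ '/search: p95 150ms > budget 100ms' ]
```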

Conclusion

These benchmarks show there are big performance differences between SSR frameworks when running the same app under load:

- TanStack Start emerged as the performance leader, handling 1,000 req/s with 13ms average latency.
- React Router delivered reliable performance with zero failures.
- Next.js struggled at this load but improved a lot after upgrading to v16 canary: throughput doubled and average latency dropped about sixfold.

Beyond the numbers, this project showed that you can’t fix what you can’t see. We use platformatic/flame for our own internal performance testing, and sharing benchmark data with framework teams led to real improvements. The TanStack team’s 252x improvement in 7 versions, and the Next.js team’s work that led to a 75% speedup in React’s RSC deserialization, both show that open performance data helps the whole ecosystem, not just one framework or project.

For teams choosing an SSR framework, these results suggest:

- High-throughput requirements: consider TanStack Start or React Router.
- Existing Next.js projects: upgrade to the latest version for major performance gains, and use Watt to get the best throughput.
- Runtime optimization: Watt provides consistent improvements across all frameworks.

We’re actively looking to speak with web performance teams. If that’s you, please send me a DM on LinkedIn or Twitter, or email hello@platformatic.dev.