Figma's next-generation data caching platform

Figma's next-generation data caching platform

In recent years, Figma's growth has tested the scalability limits of our storage infrastructure. In 2024, we rearchitected our durable metadata storage systems

How Figma’s databases team lived to tell the scale

Our nine month journey to horizontally shard Figma’s Postgres stack, and the key to unlocking (nearly) infinite scalability.

; in 2025, we expanded our ambitions to include our ephemeral storage and caching layer, built on top of Redis.

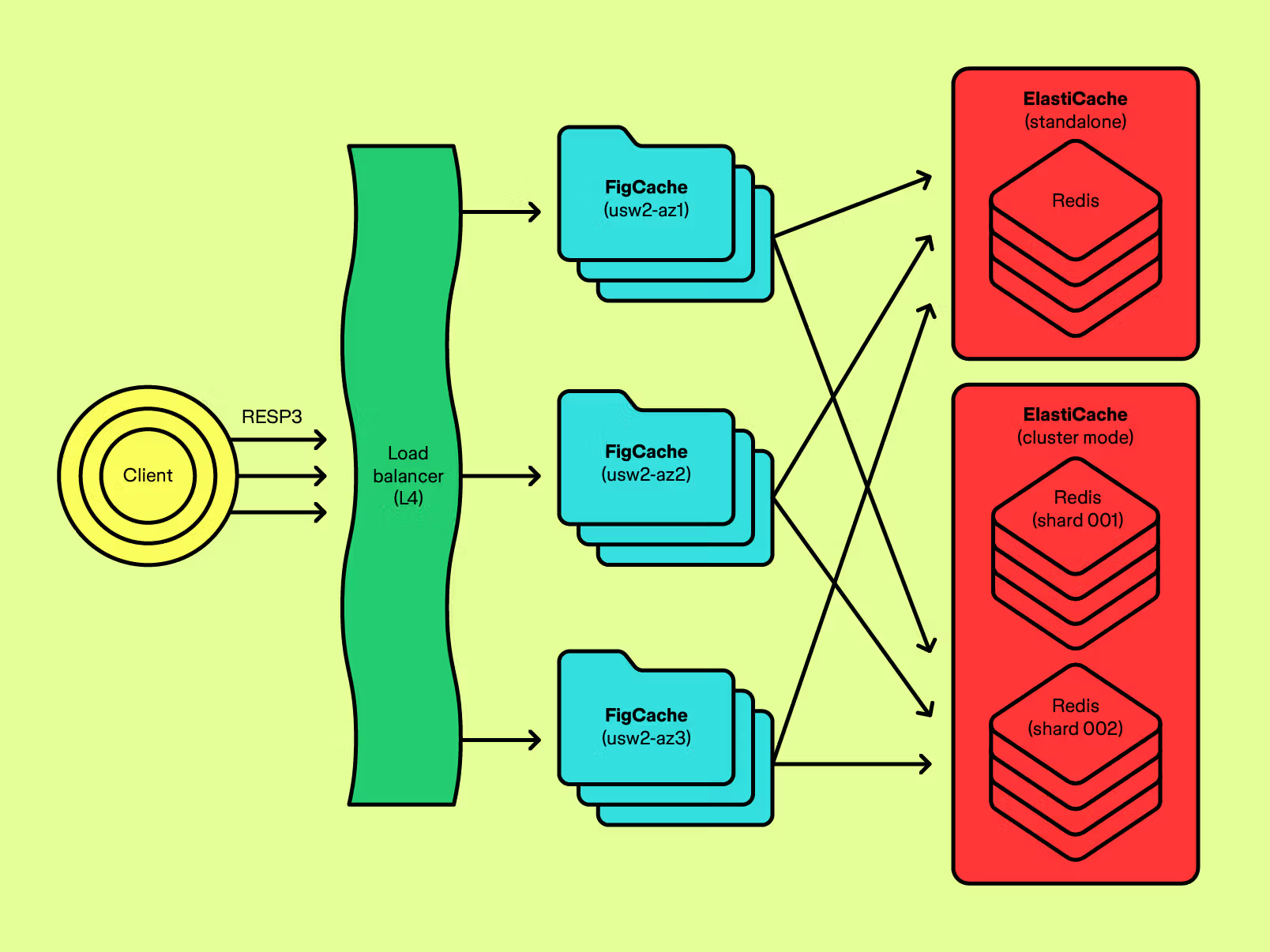

We built FigCache: a stateless, RESP-wire-protocol proxy service that acts as a unified Redis data plane, complemented by a suite of first-party client libraries. FigCache decouples connection scalability on Redis from fleet capacity volatility in client services, centralizes traffic routing, uplevels our security posture, and delivers comprehensive, end-to-end observability across Figma's entire caching stack. Since its rollout for Figma's main API service in the second half of 2025, Figma's caching layer has achieved six nines of uptime—a new reliability milestone.

Growing pains in caching

As our infrastructure footprint grew, Redis evolved from a simple, non-critical component into a critical-path dependency for site availability.

Before our rearchitecture, we had to contend with evolving structural challenges around operating a massive system at scale. Redis clusters faced growing connection volumes, slowly approaching hard limits. Rapid scale-ups of client services could trigger thundering herds of connection establishment that bottlenecked I/O and degraded availability.

Other operational challenges emerged as well. Without centralized traffic management and consistent access paradigms, applications could pollute or corrupt data across clusters. Observability features across disparate client libraries were inconsistent, making it difficult to diagnose and mitigate incidents quickly. A fragmented client ecosystem also prohibited our ability to make fleet-wide guarantees about client-side state correctness during failovers or topology changes.

Initially, we sought to remove Redis dependencies from the API subsystems supporting Figma's core functionalities. We also built localized, service-specific solutions, including a custom client-side connection pooling layer that amortized the cost of connecting to overloaded Redis clusters. While both initiatives were effective in isolating Redis outages from top-level site availability, we wanted to pursue a more strategic, longer term solution.

In response to these growing pains and historical incidents

Postmortem: Service disruptions on June 6 & 7 2022

The root cause of our recent service outage and how we resolved it.

, we sought to deliver a foundational, step-function evolution in our platform offering. We took this opportunity to rearchitect the caching stack from the ground up, and deliver a next-generation platform for internal customers.

Designing for longevity

We sought to remedy all existing core deficiencies while operating on a multi-year time horizon to support the next phase of Figma's business growth. This materialized as a concrete set of design objectives:

- Isolate Redis from client connection volatility. The connection volume served by Redis should be decoupled from the size and elasticity of client applications, and Redis should be isolated from thundering herds of new connections when client capacity scales up rapidly.

- Supply batteries-included observability features. The platform should supply consistent, multi-layered, and granular observability features so that both service owners and platform operators can monitor the availability and performance of individual workloads in an inherently multitenant environment.

Redis Cluster is a Redis deployment mode that shards data across multiple nodes, enabling arbitrary horizontal scalability.

- Expose transparent and elastic horizontal scalability behind a simplified API. Capacity elasticity of the underlying caching infrastructure should be transparent to clients. The complexity of correctly handling cluster topology changes—including scale-outs, scale-ins, node failovers, and total shard losses—should be managed at a layer below thick client libraries. For Redis, this meant abstracting away the mechanics of the Redis Cluster protocol.

- Abstract multiple backends and clusters behind a universal endpoint. Figma's Redis footprint spans many clusters with varying degrees of isolation requirements, durability expectations, criticality characteristics, and traffic volumes. Traffic routing between applications and clusters should be mediated by a centralized source of truth, disambiguating cluster partitioning decisions among applications and removing application-level complexity in configuring multiple independent endpoints and clients.

- Support seamless pluggability of alternative backend storage systems. The platform should accommodate changes in feature requirements from new workloads with minimal modifications to client applications. For example, the system should have the flexibility to provide opt-in true durability backed by an alternative storage technology, exposed behind the same protocol and API.

- Be extensible by default. The system should abstract away custom, Figma-specific data plane logic across all applications, like inline data encryption, guardrail enforcement, traffic backpressuring, and more.

An opportunity for new foundational technologies

In search of solutions, we determined a need for two key pieces of infrastructure.

The first was a caching proxy service, acting as a unified Redis data plane and ingress layer. This would offer applications a consistent, language-agnostic interface for accessing Redis, and hide the complexity of traffic routing and cluster management. It would act as a connection multiplexer, isolating connection scalability on Redis clusters from connections created by Redis clients. Additionally, it would implement a standardized, comprehensive observability system to provide consistent monitoring on all Redis traffic, for any connecting application.

The second was first-party, cross-language, battle-tested client libraries. These libraries would provide a Figma-wide baseline for supported client features, enable universally consistent and language-agnostic client-level observability, and guarantee use of vetted configuration parameters. To be minimally invasive to existing applications, we opted to build wrappers over existing, established open source Redis client libraries that were already in use in the codebase; this allowed us to forgo significant additional complexity in creating a proprietary protocol or adopting and migrating to a new client technology altogether.

We landed on a three-phase delivery strategy:

- Develop opinionated, first-party clients in core Figma server-side languages (Go, Ruby, and Typescript), and migrate all services to use these clients, without otherwise changing the endpoint(s) they connect to.

- Develop and productionize an internal Redis proxy service, and tackle all scalability, reliability, operability, and observability challenges at that layer.

- Facilitate a gradual but reversible migration of applications to the proxy service, with a carefully planned rollout sequence that trades off site availability risk and potential for reliability wins.

Why we built instead of bought

In many ways, Figma faced classic infrastructure problems, for which solutions exist in open source. We carefully evaluated build-versus-buy tradeoffs, and ultimately decided to build a proxy system in-house.

Existing solutions shipped with rudimentary RPC servers that were not capable of extracting full, annotated arguments from arbitrary inbound Redis commands. This limited our ability to build generic yet comprehensive runtime guardrails that operated on the rich semantics of commands.

Redlock is the canonical algorithm for a distributed lock implementation over multiple, independent Redis clusters.

Similarly, this prevented us from defining and implementing custom commands that could be intercepted and executed by the proxy itself. We needed the flexibility to augment the Redis protocol to expose capabilities that would otherwise be duplicated among clients. This included, among other extensions, a language-agnostic, multi-cluster distributed locking abstraction over Redlock, and a protocol-native graceful connection draining mechanism to accommodate rapid continuous deployments.

Beyond protocol extensibility, we also had to contend with the realities of our fragmented client ecosystem. Existing applications connected to Redis with various permutations of Redis Cluster-awareness, TLS in-transit encryption, and other connection parameters. A proprietary proxy layer allowed us to build several shims in the RPC layer that transparently handled all these quirks—like a Redis Cluster mode emulation layer that exposed the proxy to cluster-aware clients as a fake cluster—making migrations significantly easier and less risky.

Finally, extending existing open source Redis proxies with custom business logic proved heavyweight and logistically brittle, requiring maintenance of a source code fork that would be difficult to keep in sync with upstream. We designed our proxy to be internally composable, making it straightforward to extend command processing and execution with proprietary logic. This enabled forward-looking optionality to build features like priority-aware traffic load control (QoS-based backpressuring), inline data encryption and compression, multi-upstream traffic mirroring, highly customizable command usage restrictions, and more.

FigCache, our in-house caching service and client ecosystem

RESP refers to the Redis Serialization Protocol, a specification of the text-encoded wire format used for transacting structured data between Redis clients and servers.

To address our design objectives, we built FigCache: a stateless, RESP-wire-protocol proxy service backed by a fleet of Redis clusters on AWS ElastiCache, complemented by a suite of rich, first-party client libraries.

FigCache is a stateless, highly available, and horizontally scalable service that proxies connections to ElastiCache Redis clusters.

Decoupled proxy architecture

We architected FigCache to be maximally flexible for plug-and-play extensibility. Key to this objective was out-of-the-box support for:

- Alternative frontends, to expose FigCache capabilities to services without necessarily requiring a RESP or Redis client.

- Alternative storage backends, to support durable Redis alternatives like AWS MemoryDB, or [Figma's in-house Postgres stack

How Figma’s databases team lived to tell the scale

Our nine month journey to horizontally shard Figma’s Postgres stack, and the key to unlocking (nearly) infinite scalability. ](https://www.figma.com/blog/how-figmas-databases-team-lived-to-tell-the-scale/). - Composable inline request and response manipulation engines, to support capabilities like read/write connection splitting, key pattern-based routing, internally parallelized scatter-gather execution, and more.

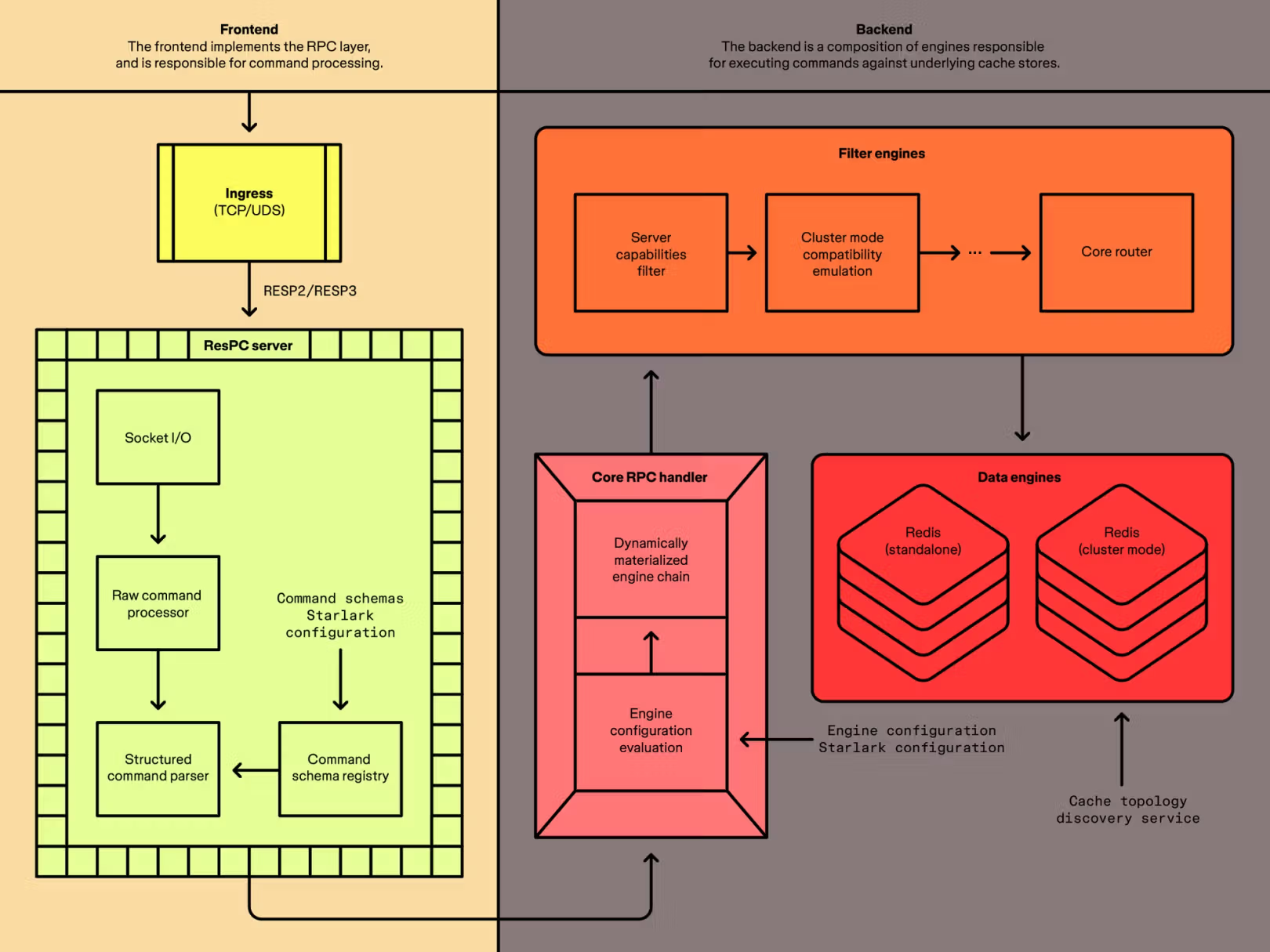

FigCache is internally partitioned into independent RPC and execution layers.

FigCache is built on a decoupled internal architecture that separates frontends and backends. The frontend layer encapsulates all client interaction, which includes the RESP-based RPC system, network I/O responsibilities, connection management, and protocol-aware structured command parsing. The backend layer encapsulates command processing and manipulation, connection multiplexing to storage backends, and physical command execution.

A native, drop-in replacement

We designed FigCache to be a drop-in Redis replacement for applications, transparently handling responsibilities of connection pooling, traffic routing, and observability. In the simplest case, migrating an application to FigCache was as trivial as a one-line endpoint configuration change.

We also sought to push the complexity of connection management to FigCache, and isolate from Redis the cost of client-side connection establishment. This required carefully designing the RPC layer to make connections as cheap as possible, and building a connection pooling layer that multiplexes commands from orders of magnitude more client connections to a finite volume of outbound connections to Redis.

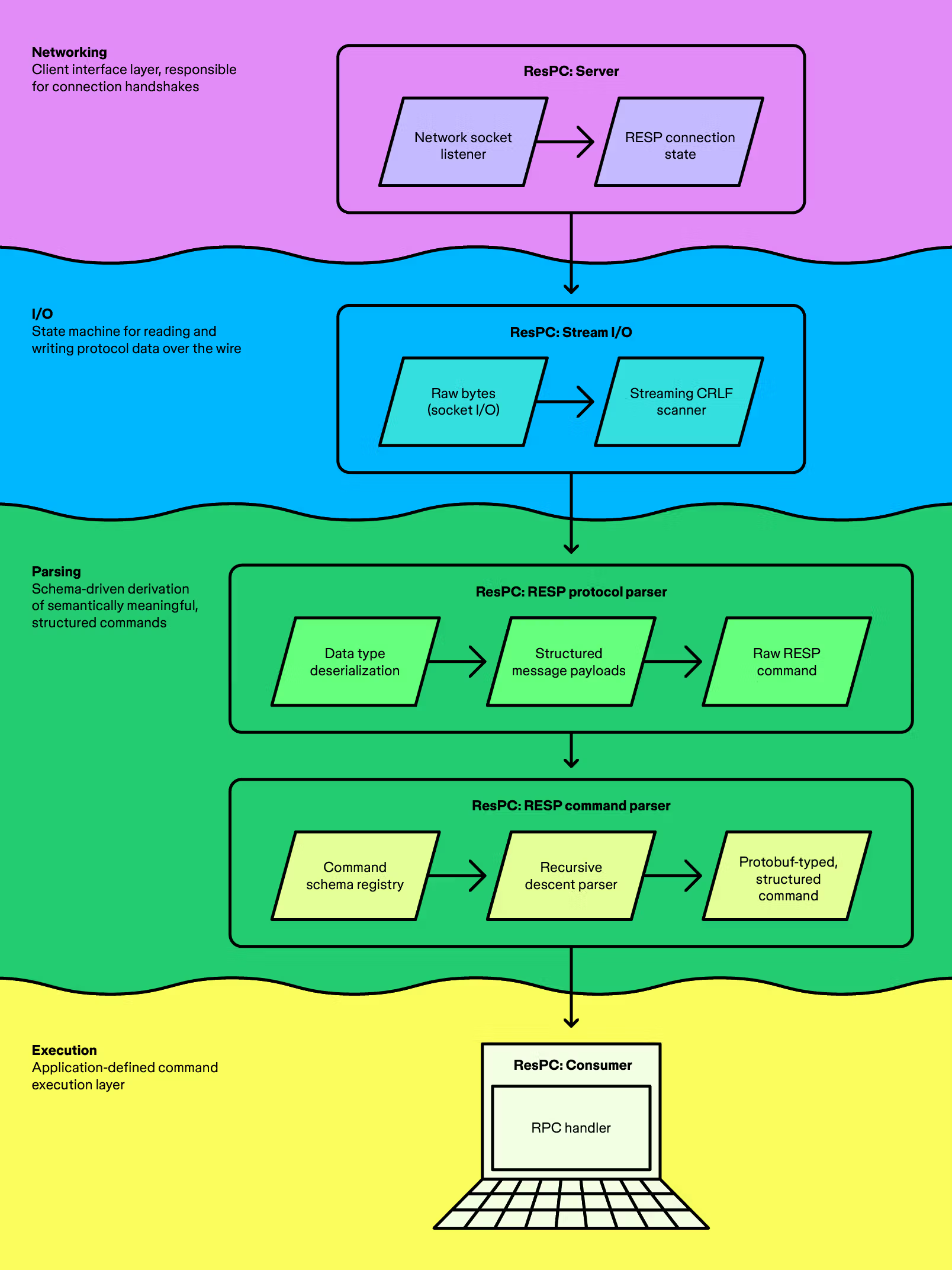

To meet these objectives, we developed ResPC (a portmanteau of RESP and RPC), a Go library providing an RPC framework for building servers over RESP. ResPC is the entry point into FigCache's core command processing and execution engine.

ResPC derives structured, semantically rich RESP commands from a stream of raw bytes issued by Redis clients.

The ResPC framework is split into several independent components:

- Server layer: Responsible for accepting connections from clients, managing in-memory client connection state, and efficient network I/O.

- Streaming RESP protocol parser: Handles incremental parsing and serialization of RESP messages over the wire.

- Schema-driven structured command parser: Derives semantically meaningful parameters from RESP commands, driven by a schema registry that declaratively expresses supported command sequences with annotated arguments.

- Command dispatch layer: Performs implementation-agnostic command processing and execution. In FigCache, this is the layer that implements the core proxy logic, dispatching commands for execution against various upstream clusters.

Configuration-driven, dynamic engines

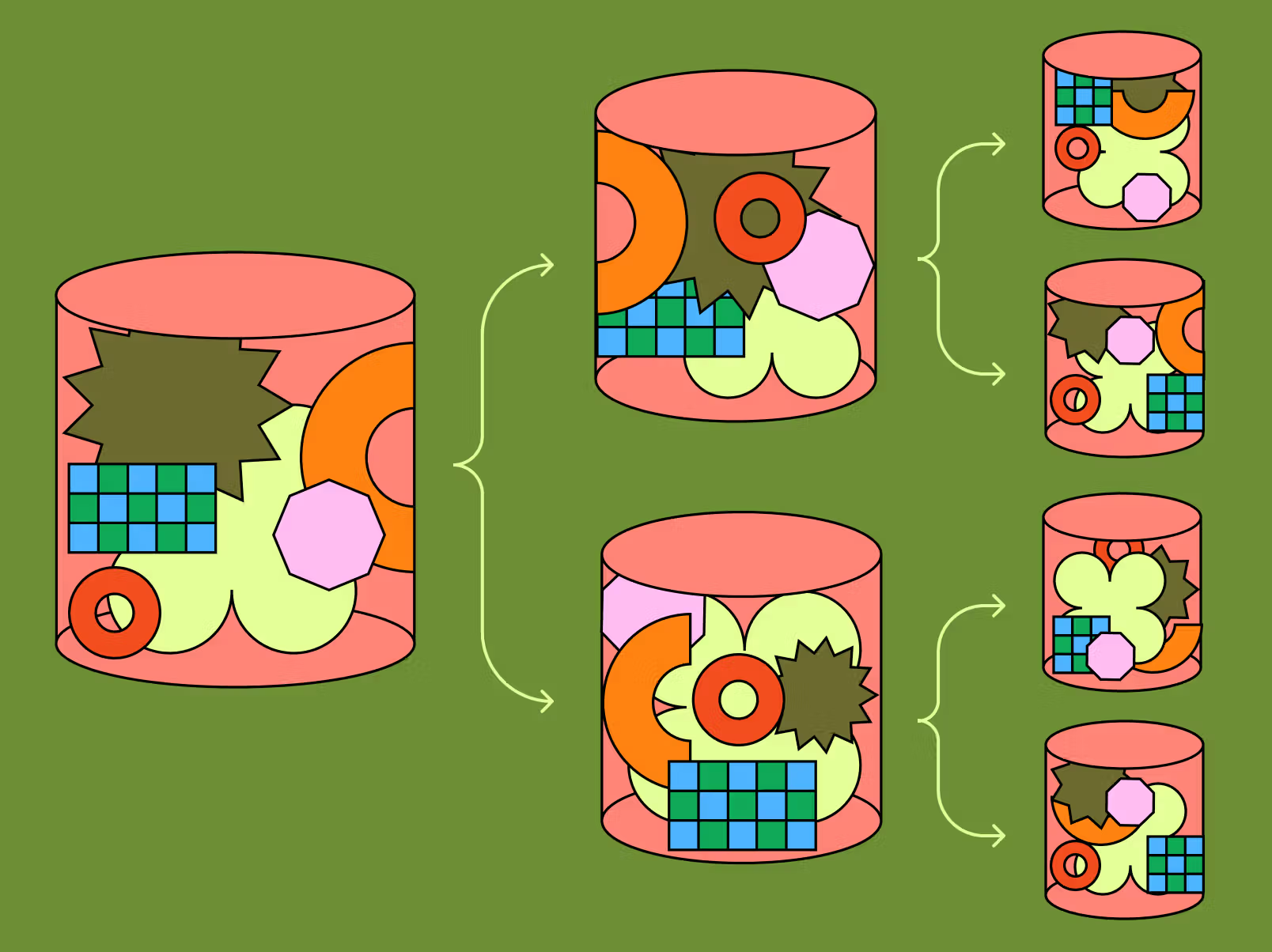

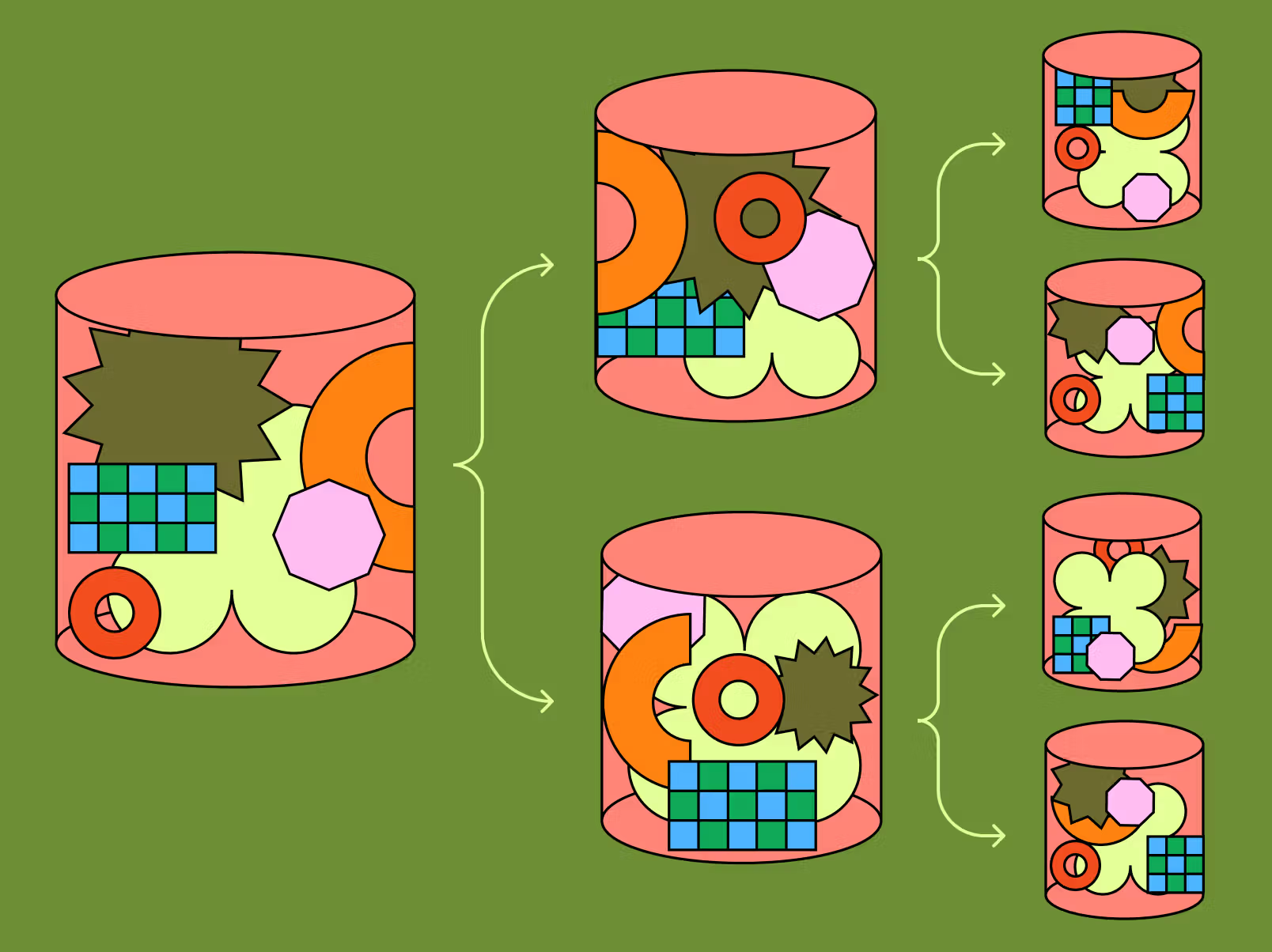

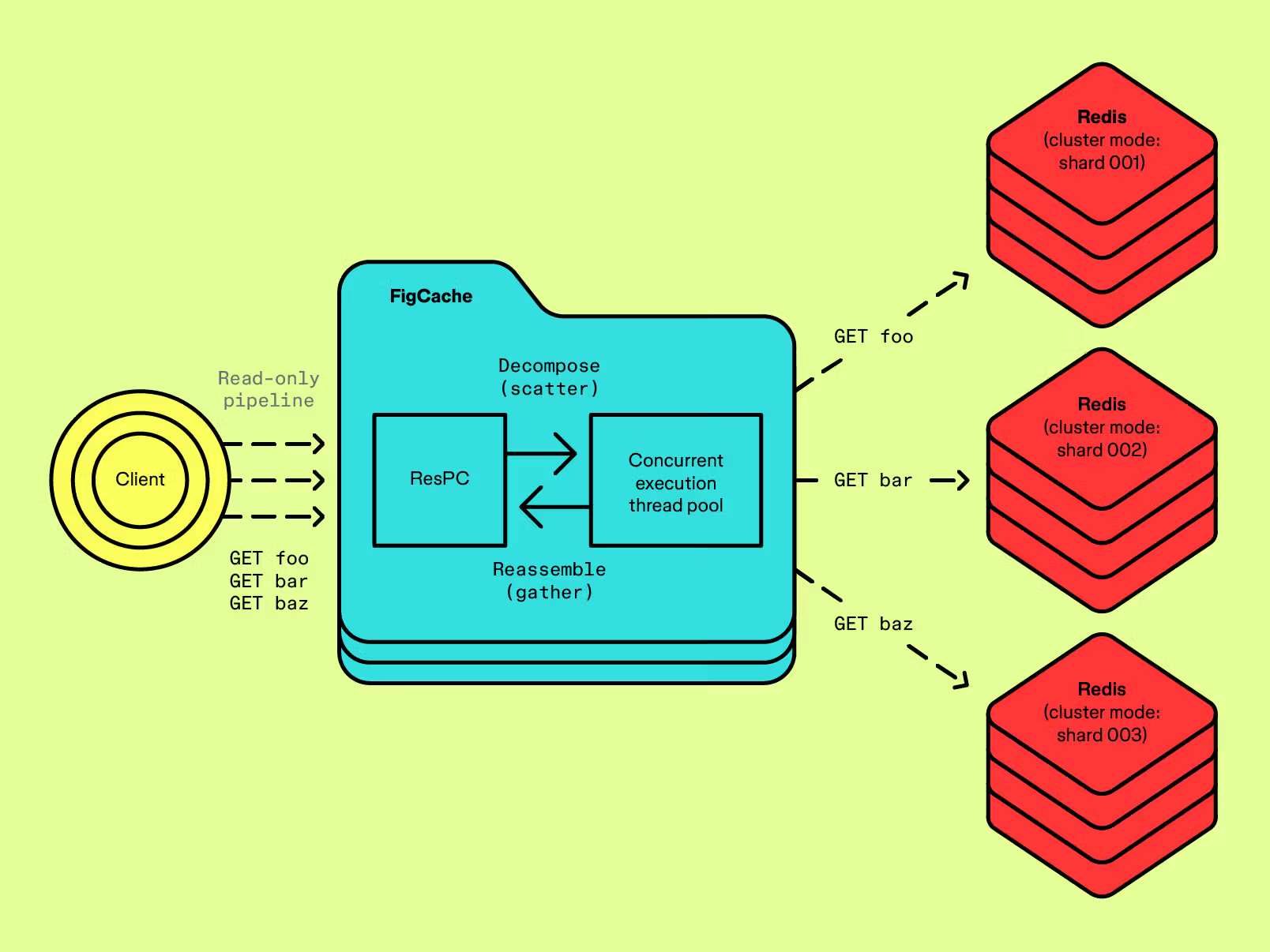

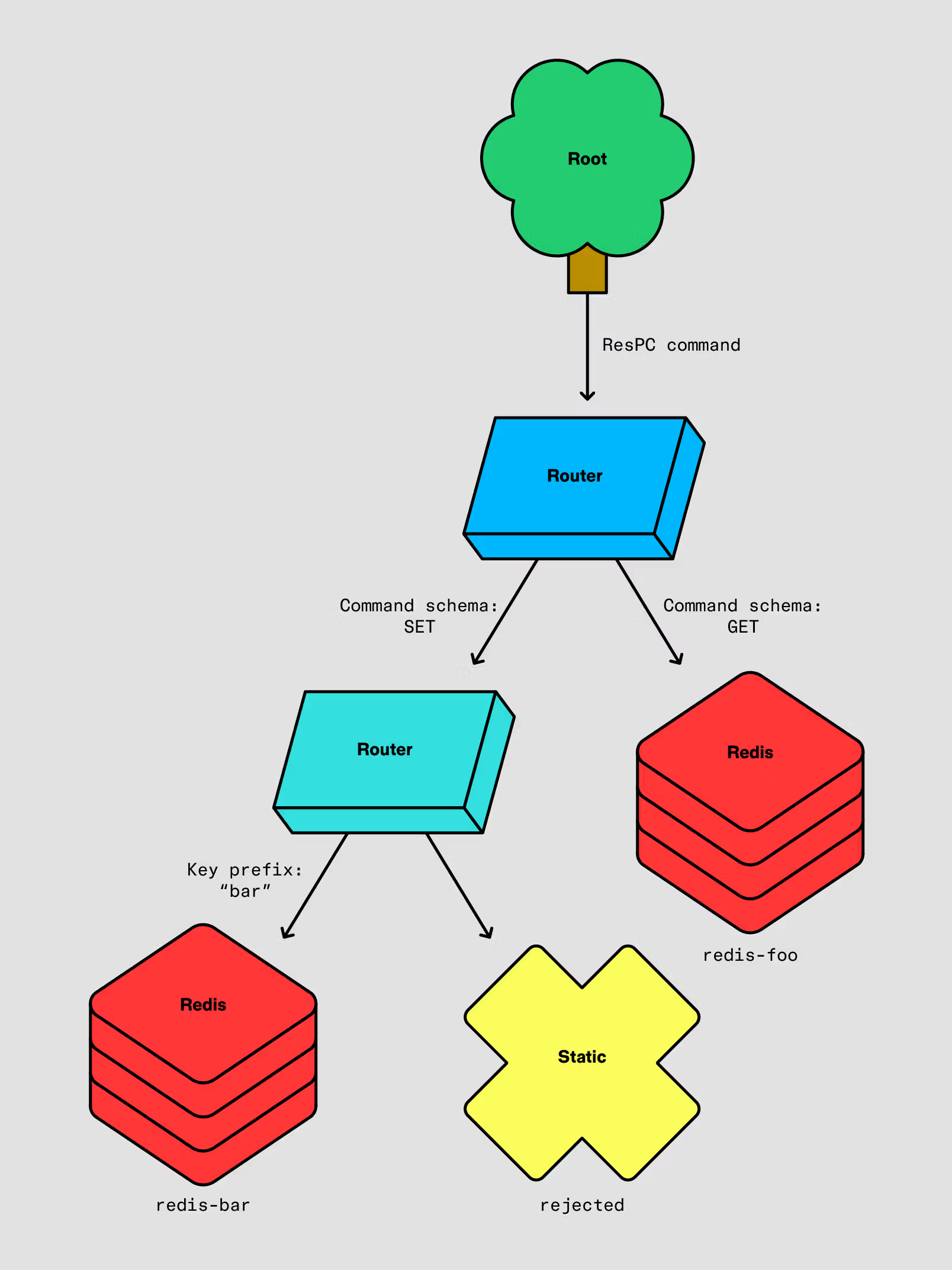

FigCache's backend layer is modeled as a dynamically-assembled tree of engine nodes, each of which is a discrete unit of logic that accepts a structured ResPC command as input and produces a similarly structured ResPC reply. Leaf-level nodes are "data engines" responsible for executing commands against Redis; intermediate-level nodes are "filter engines" that route, block, or modify commands inline before passing execution to child engines. Conceptually, processing a command in FigCache is equivalent to executing this directed graph over the command, starting from the root.

Redis in cluster mode returns CROSSSLOT errors for multi-key operations, like pipelines and transactions, that span different hash slots. This implies that the operation may be cross-shard, and therefore is not guaranteed to be executed atomically (for transactions) or over a single physical connection (for pipelines).

For example, the fanout filter engine intercepts eligible multi-shard pipelines and internally executes them as a parallelized scatter-gather, dispatching many individual commands and aggregating the responses. This allows FigCache to transparently resolve read-only batch operations that would normally have surfaced to clients as a CROSSSLOT violation.

The fanout execution engine internally resolves certain cross-shard, read-only pipelines as parallelized scatter-gathers.

The entire engine tree is expressed in configuration, and assembled at runtime during server initialization. To accommodate the expression of complex, nested engine nodes, we developed a custom configuration system that models engine configuration as a Starlark program, dynamically evaluated at runtime in a virtual machine, which renders a Protobuf-structured configuration definition consumed by FigCache's backend.

This enables operators to express complex runtime behaviors exclusively in configuration, without requiring heavyweight changes to core business logic in the server or deployments of updated server binaries. For example, command-type splitting, key-prefix–based routing and rejection, and proxying to distinct Redis clusters can be modeled as a composition of a few primitive engine building blocks. This is illustrated below in the configuration program and accompanying engine tree visualization.

Starlark configuration programs materialize a command execution graph that can be modeled as a tree, whose nodes are individual engines.

def main():

"""

This configuration program expresses hierarchical evaluation

of keys to conditionally serve or reject requests in two Redis

clusters, foo and bar.

It is modeled by composing two primitives--a Router, which

splits execution among multiple child engines based on a match

of the command schema or key pattern, and a Redis, which

executes the command against a Redis cluster.

GET commands are unconditionally served by redis-foo.

SET commands are served by redis-bar, but only if its key

starts with \`bar:\`; other keys are rejected with a static

error message.

"""

redis_foo = enginepb.Redis(...)

redis_bar = enginepb.Redis(...)

bar_router = enginepb.Router(

rules = [

enginepb.Rule(

prefix = enginepb.Rule.Prefix(prefix = "bar:"),

engine = redis_bar,

),

enginepb.Rule( # passthrough

engine = enginepb.Static(

reply = respcpb.Reply(message = "rejected"),

),

),

],

)

cmd_router = enginepb.Router(

rules = [

enginepb.Rule(

command = respcpb.Schema(name = "GET"),

engine = redis_foo,

),

enginepb.Rule(

command = respcpb.Schema(name = "SET"),

engine = bar_router,

),

],

)

return cmd_router

The big migration

Our overarching migration design and strategy was directed by a number of important principles:

- Build correctness confidence early. FigCache should be rigorously exercised in application integration tests and in live environments with synthetic load generation.

- Minimize the code changes required of client applications. Adopting the new system should be as low-friction as possible. We incurred some upfront engineering cost to migrate all Figma applications to use first-party FigCache client wrappers; however, this was a much lighter lift since we maintained interface compatibility with existing open source clients.

- Provide granular switches to roll over traffic gradually. Services should be opted in to use FigCache on a case-by-case basis. Additionally, for large workloads, like Figma's main API service, traffic should be incrementally shifted across multiple, independent domains—an all-or-nothing approach was unacceptable.

- Ensure changes are reversible at runtime with appropriate feature flags. In an emergency, we need the ability to quickly revert live traffic, without requiring code changes or binary deployments.

Beyond these goals, we knew we also needed to derisk the potential impact of performance regressions. Introducing a proxy tier necessarily adds latency due to additional critical-path network hops and layers of I/O. We knew this could be a show-stopping blocker, and came up with a few strategies to understand and optimize performance.

First, we ran extensive performance evaluations with a suite of open source and internal Redis benchmark tools. Notably, this included installation of a distributed stress test that runs weekly, on production, surging throughput to an order of magnitude higher than Figma's typical organic peak. This served as an automated, ongoing validation of our system's end-to-end production capacity under excessive load.

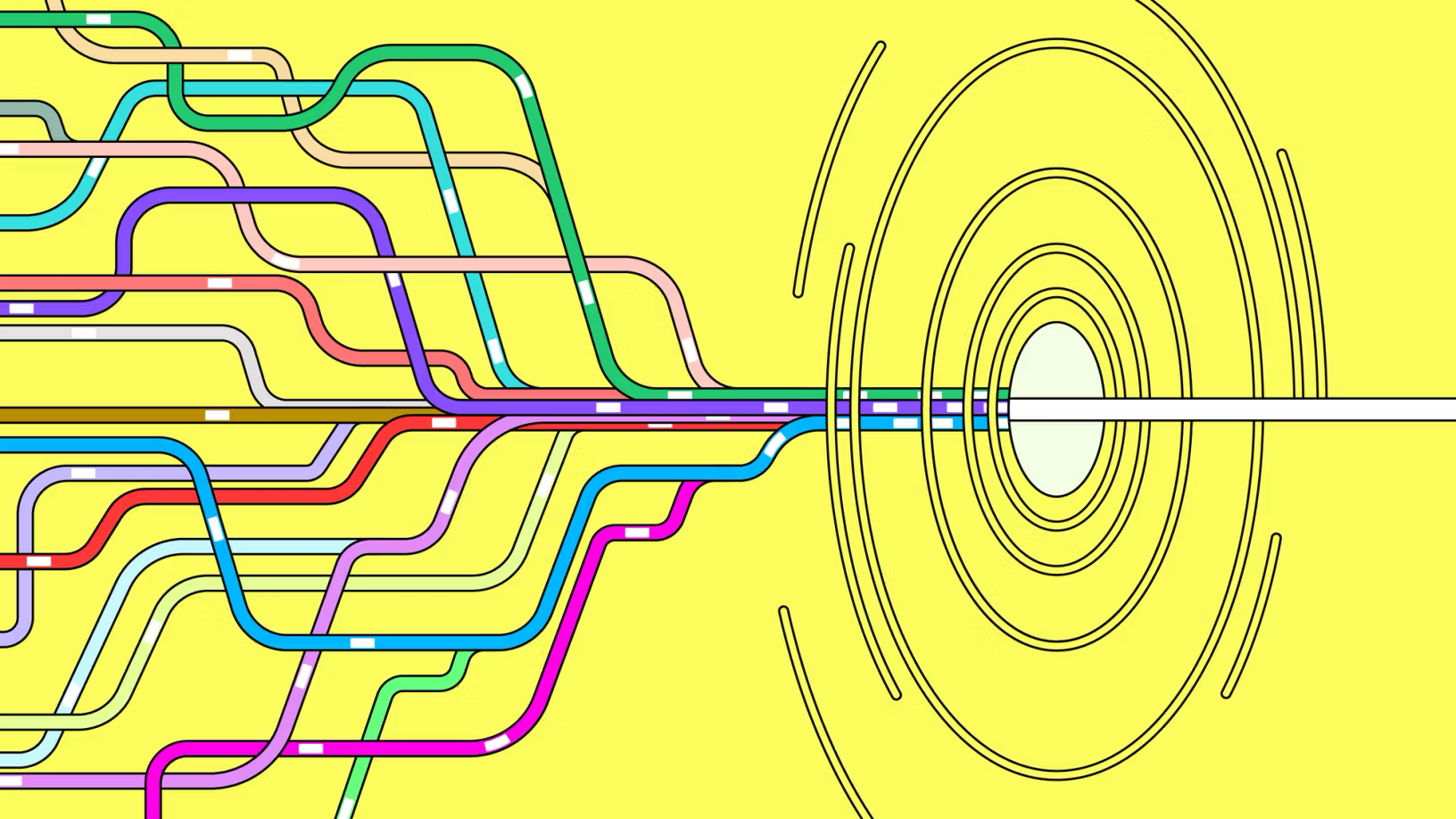

To control latency effects through the network path, we deployed routing-level configuration to probabilistically prefer zonal traffic colocation across client services, FigCache load balancers, and FigCache service instances themselves. The latency penalty of a network hop between AWS availability zones can be as much as a few milliseconds—this optimization makes this penalty much more stable and predictable.

We also built development-time performance assessment tools to support a real-time feedback loop for analyzing the potential performance penalties of code changes. On every pull request, our continuous integration system automatically produces CPU and memory profiles for tests of critical hot code paths, and hermetically exercises synthetic benchmarks against the server, ensuring there are no significant regressions against a golden performance baseline.

What we unlocked

In 2025, we delivered FigCache for multiple critical Figma services with no disruption to availability or functionality, which provided confidence it accomplished all of our core design objectives.

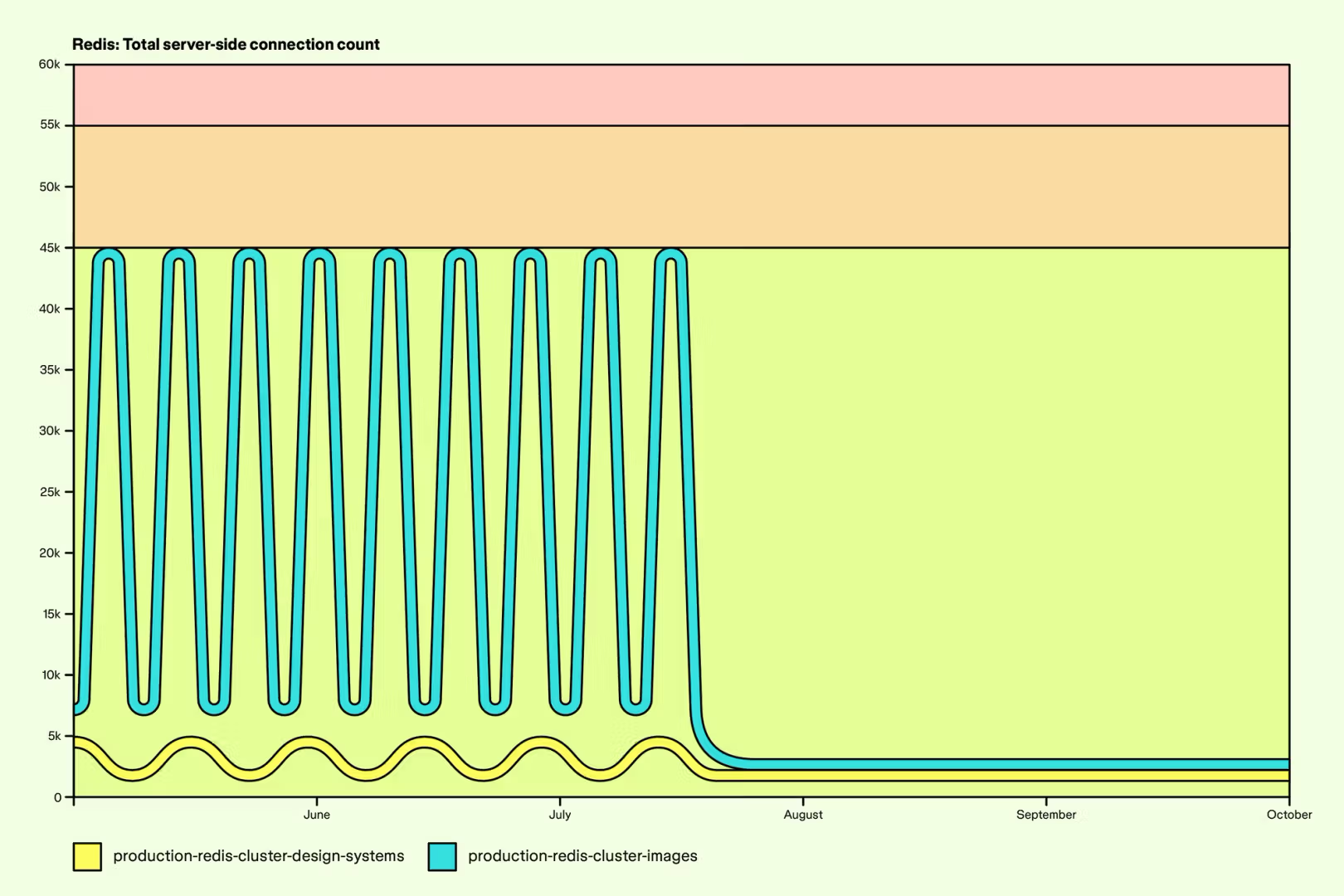

Infrastructure scalability

FigCache foundationally solves connection scalability challenges. This has in turn unlocked the ability to rapidly scale Figma's API fleet in response to user traffic, without adverse impact on the underlying infrastructure. As a connection pooling layer, FigCache has also alleviated absolute connection pressure on our most critical Redis clusters, derisking many years of continued capacity growth.

Following the rollout for 100% of Redis traffic originating from Figma's main API service, connection counts on Redis clusters dropped by an order of magnitude across the board, and became significantly less volatile despite an unchanged, diurnal site traffic pattern.

Step-function improvements to reliability

Connection pooling in FigCache eliminated an entire class of reliability risks around thundering herds of new connections from clients, a theme that materialized across many high-severity incidents. Additionally, standardizing on FigCache as a universal Redis access tier simplifies the platform guarantee of correctness in handling ElastiCache operational events. Node failovers, cluster scaling activities, and transient connectivity errors are now zero-downtime events.

Comprehensive observability

The entire caching stack is now instrumented end-to-end with metrics, logs, and traces. Out-of-the-box automatic measurement of the availability, throughput, latency, payload size, command cardinality, and connection distribution characteristics of all Redis traffic has reduced the time to diagnose incidents and performance regressions from hours or days to minutes.

Additionally, FigCache's routing-layer traffic classification engine now ascribes rich ownership metadata to all inbound Redis commands. This has allowed us to slice core operational metrics across hundreds of unique application workloads by tier, durability expectations, consistency requirements, and more.

More broadly, this work enabled us to formally define a caching platform SLO and precisely quantify the aggregate reliability profile of Redis at Figma.

Minimal-overhead infrastructure operability

Historically, operations like hardware rotations, cluster topology modifications, OS upgrades, and security updates required expensive cross-team coordination and, in rare cases, scheduling of site downtime. Pushing down this responsibility to FigCache has downgraded these events from high-severity incidents to routine, zero-downtime background operations. In particular, shard failovers now require zero operator intervention, and are executed liberally and frequently across our entire Redis footprint—partially to serve as a regular, production-environment live exercise of the system's built-in resiliency to Redis topology volatility.

We're hiring engineers! Learn more about life at Figma, and browse our open roles.

This work is the product of countless contributions by the Storage Products team in collaboration with many other engineers across Figma Infrastructure. It was made possible by Justin Palpant, Indy Prentice, Pratik Agarwal, and Yichao Zhao, with additional contributions by Devang Sampat, Mehant Baid, Alex Sosa, Ankita Shankar, Anna Saplitski, Can Berk Guder, Jim Myers, Lihao He, Manish Jain, and Ping-Min Lin.

Kevin is a software engineer on the Storage Products team, primarily supporting Figma's caching and ephemeral data platform.