Efficient pretraining with token superposition

Efficient pretraining with token superposition - Nous Research

2605.06546

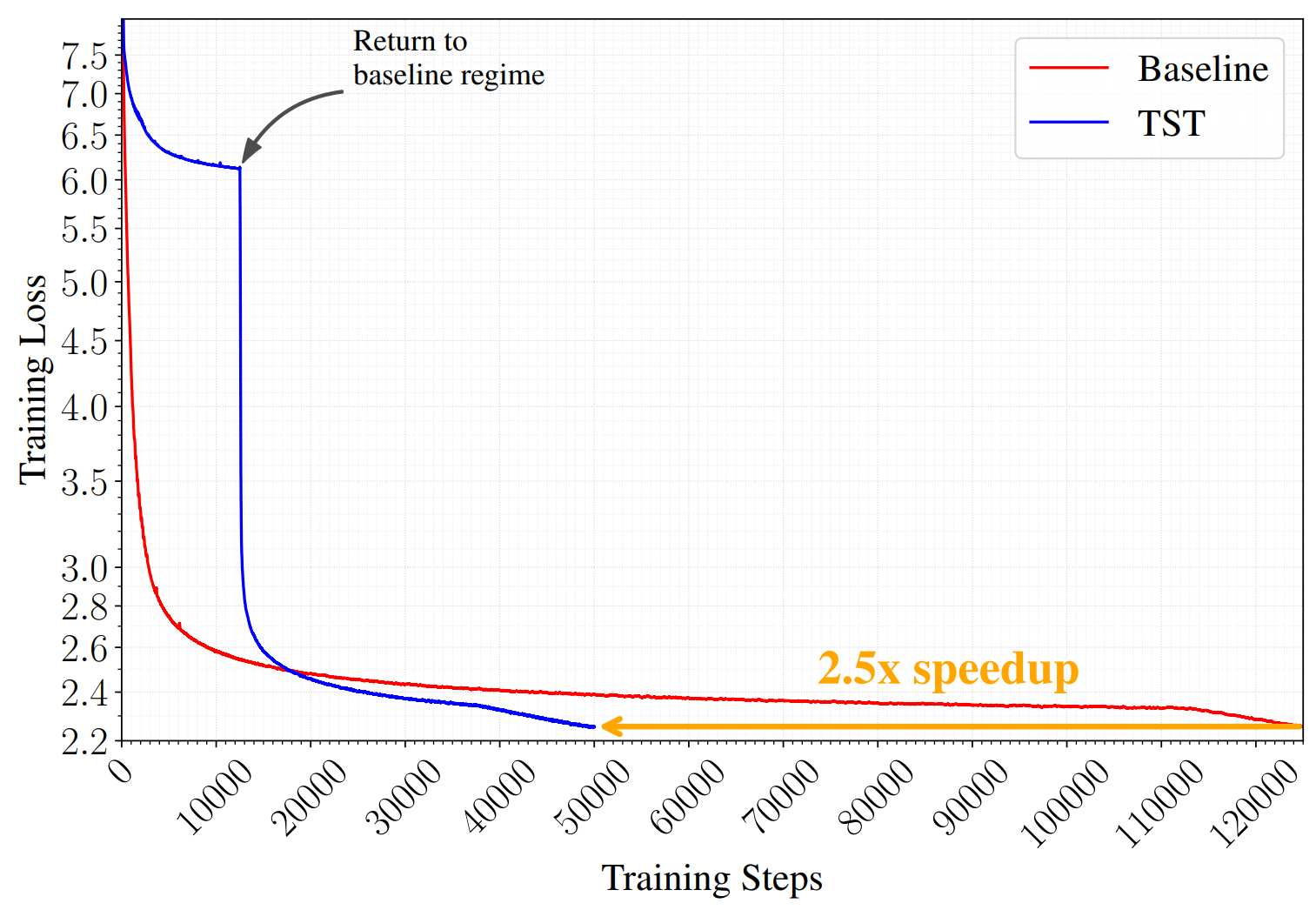

Figure 1. Loss curves during pretraining of two Qwen3-shaped 10B-A1B MoEs at matched FLOPs per step. The TST run consumes 2T tokens over its course; the baseline consumes 1.05T. The two runs are stopped at matched final loss, which is how we read speedups at iso-loss off the wall-clock axis.

TL;DR. A 2–3× wall-clock speedup on LLM pretraining at matched FLOPs, without changing the final model architecture, optimizer, tokenizer, or training data. During the first 20–40% of training, the model reads and predicts bags of $s$ contiguous tokens — averaging their embeddings on the input side, predicting the next bag with a modified cross-entropy on the output side. For the remainder of the run, it trains normally on next-token prediction. The inference-time model is identical to one produced by conventional pretraining. Validated at 270M, 600M, and 3B dense, and at 10B-A1B MoE.
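
To make the bagging step concrete, here is a minimal, dependency-free sketch of the two operations described above: averaging the embeddings of $s$ contiguous tokens on the input side, and scoring a predicted distribution against the next bag on the output side. The function names `bag_inputs` and `bag_cross_entropy` are hypothetical. The text above does not spell out the exact form of the modified cross-entropy, so the loss here is an assumption: the mean negative log-likelihood over the tokens in the target bag. Dropping a ragged tail shorter than $s$ is also an assumption.

```python
import math


def bag_inputs(token_ids, embedding, s):
    """Group token ids into bags of s contiguous tokens and average their embeddings.

    `embedding` is a list of per-token vectors (a stand-in for an embedding table).
    Assumption: a trailing group shorter than s is dropped.
    """
    usable = len(token_ids) - len(token_ids) % s
    bags = [token_ids[i:i + s] for i in range(0, usable, s)]
    dim = len(embedding[0])
    averaged = [
        [sum(embedding[t][d] for t in bag) / s for d in range(dim)]
        for bag in bags
    ]
    return bags, averaged


def log_softmax(logits):
    """Numerically stable log-softmax over a list of logits."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]


def bag_cross_entropy(logits, target_bag):
    """Assumed bag loss: mean negative log-probability of the tokens in the next bag."""
    logp = log_softmax(logits)
    return -sum(logp[t] for t in target_bag) / len(target_bag)


# Toy example: vocabulary of 4 tokens, 2-dimensional embeddings, bag size s = 2.
embedding = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [3.0, -1.0]]
bags, averaged = bag_inputs([0, 1, 2, 3, 0], embedding, s=2)
print(bags)      # [[0, 1], [2, 3]]
print(averaged)  # [[0.5, 0.5], [2.0, 0.0]]

# Loss for predicting the second bag from uniform logits over the vocabulary.
print(bag_cross_entropy([0.0, 0.0, 0.0, 0.0], bags[1]))
```

With $s = 1$, each bag holds a single token, so the averaged embedding is the ordinary token embedding and this loss reduces to standard next-token cross-entropy. That matches the description of the second phase, where training proceeds on conventional next-token prediction.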

We introduce Token Superposition Training (TST), a modification to the standard LLM pretraining loop that produces substantial ...