Prompt caching in LLMs, clearly explained

A case study on how Claude achieves a 92% cache hit rate

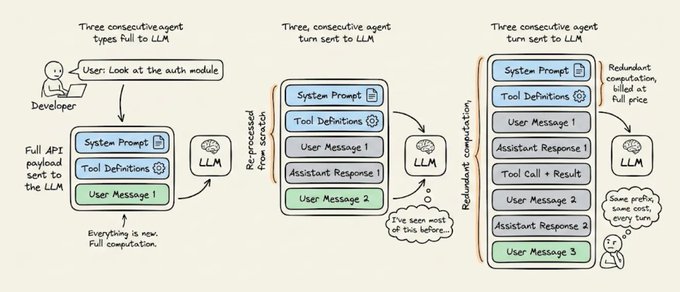

Every time an AI agent takes a step, it sends the entire conversation history back to the LLM.

That includes the system instructions, the tool definitions, and the project context it already processed three turns ago. All of it gets re-read, re-processed, and re-billed on every single turn.

For long-running agentic workflows, this redundant computation is often the most expensive line item in your entire AI infrastructure.

A system prompt with 20,000 tokens running over 50 turns means 1 million tokens of prefix processing billed at full price, and all but the first 20,000 are redundant work that produces zero new value. That cost compounds across every user and every session.
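
To make the arithmetic concrete, here is a minimal sketch in Python using the figures from the example above. The function name `resent_prefix_tokens` is hypothetical, and the calculation counts only the static prefix, not the growing conversation history, so real totals would be higher.

```python
def resent_prefix_tokens(prefix_tokens: int, turns: int) -> tuple[int, int]:
    """Return (total, redundant) prefix tokens processed when nothing is cached.

    Assumption: the same prefix is sent unchanged on every turn.
    """
    total = prefix_tokens * turns
    # Only the first pass over the prefix is new work; every later pass repeats it.
    redundant = prefix_tokens * max(turns - 1, 0)
    return total, redundant


total, redundant = resent_prefix_tokens(prefix_tokens=20_000, turns=50)
print(f"prefix tokens processed: {total:,}")
print(f"of which repeated work:  {redundant:,}")
```

With these inputs the total is 1,000,000 tokens, of which 980,000 repeat work already done on the first turn.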

The fix is prompt caching. But to use it well, you need to understand what’s actually happening under the hood.

Static vs. Dynamic context

Before you can optimize a prompt, you need to understand what changes and what doesn’t.

Every agent request has two fundamentally different parts:

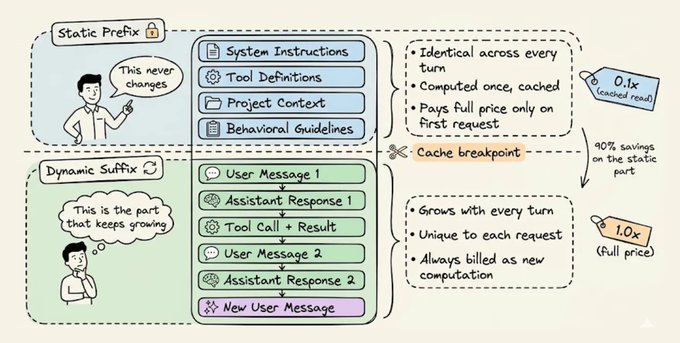

- The static prefix that stays identical across turns: system instructions, tool definitions, project context, and behavioral guidelines.

- The dynamic suffix that grows with every turn: user messages, assistant responses, tool outputs, and terminal observations.

This split is what makes prompt caching possible. The infrastructure stores the mathematical state of the static prefix so that subsequent requests sharing that exact prefix can skip the computation entirely and read from memory.
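
The exact-prefix requirement can be illustrated with a toy model. This is a sketch under stated assumptions, not how any provider implements caching: `ToyPrefixCache`, `_expensive_prefill`, and `STATIC_PREFIX` are hypothetical names, and the cache here hashes raw text, whereas a real system would match on tokens and store the model's internal state for the prefix.

```python
import hashlib


class ToyPrefixCache:
    """Toy stand-in for a provider-side prompt cache, keyed on the exact prefix text."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def _expensive_prefill(self, prefix: str) -> str:
        # Placeholder for the real computation over the prefix.
        return f"state({len(prefix)} chars)"

    def get_state(self, prefix: str) -> str:
        key = hashlib.sha256(prefix.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1  # identical prefix seen before: reuse stored state
        else:
            self.misses += 1  # new or changed prefix: compute and store
            self._store[key] = self._expensive_prefill(prefix)
        return self._store[key]


STATIC_PREFIX = "system instructions\ntool definitions\nproject context\n"

cache = ToyPrefixCache()
history: list[str] = []
for turn in range(1, 6):
    history.append(f"user message {turn}")  # dynamic suffix grows every turn
    cache.get_state(STATIC_PREFIX)          # static prefix is identical every turn

print(cache.hits, cache.misses)  # 4 1

# Changing the prefix by even one character produces a different key, so it misses.
cache.get_state(STATIC_PREFIX + " ")
print(cache.hits, cache.misses)  # 4 2
```

Only the first turn pays for the prefix; the next four reuse the stored state. The final call shows why the prefix has to stay byte-for-byte identical: a single added space is enough to miss.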

Once you internalize this, every ar...